Visualize Azure Data Lake Storage Data in TIBCO Spotfire through OData

Integrate Azure Data Lake Storage data into dashboards in TIBCO Spotfire.

OData is a major protocol enabling real-time communication among cloud-based, mobile, and other online applications. The CData API Server provides Azure Data Lake Storage data to OData consumers like TIBCO Spotfire. This article shows how to use the API Server and Spotfire's built-in support for OData to access Azure Data Lake Storage data in real time.

Set Up the API Server

If you have not already done so, download the CData API Server. Once you have installed the API Server, follow the steps below to begin producing secure Azure Data Lake Storage OData services:

Connect to Azure Data Lake Storage

To work with Azure Data Lake Storage data from TIBCO Spotfire, we start by creating and configuring an Azure Data Lake Storage connection. Follow the steps below to configure the API Server to connect to Azure Data Lake Storage data:

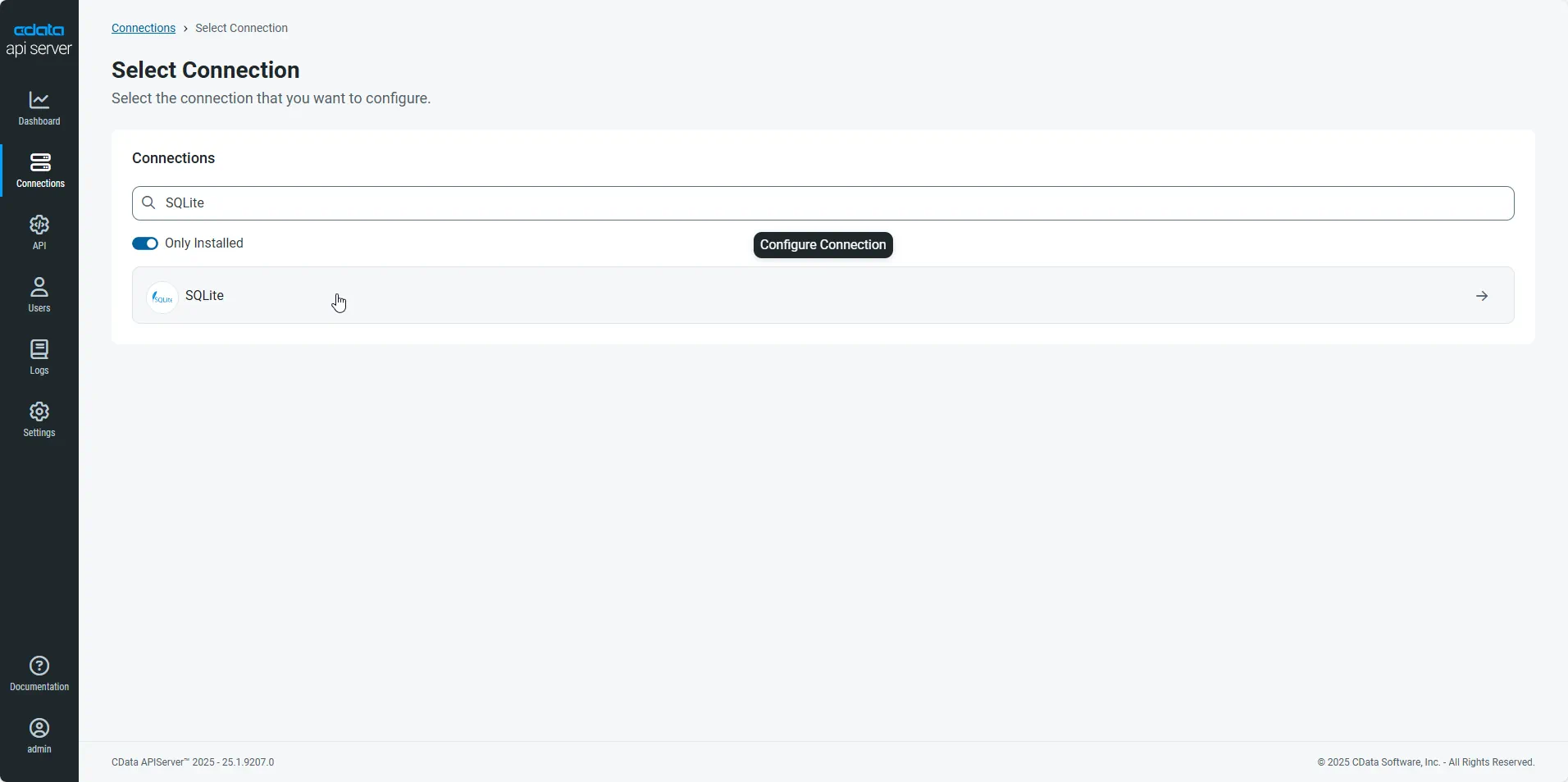

- First, navigate to the Connections page.

-

Click Add Connection and then search for and select the Azure Data Lake Storage connection.

-

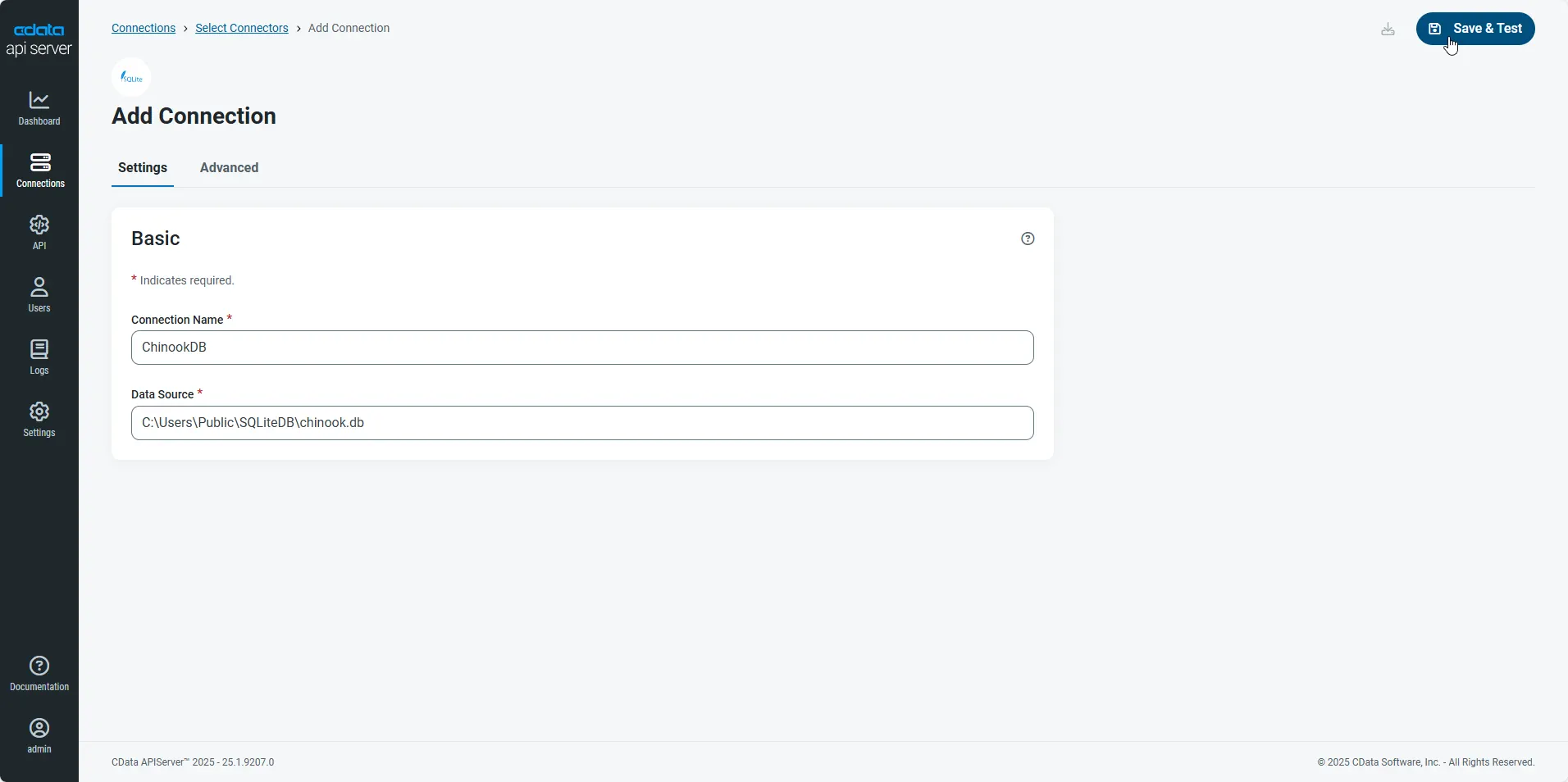

Enter the necessary authentication properties to connect to Azure Data Lake Storage.

Authenticating to a Gen 1 DataLakeStore Account

Gen 1 uses OAuth 2.0 in Entra ID (formerly Azure AD) for authentication.

For this, an Active Directory web application is required; create one before setting the properties below.

To authenticate against a Gen 1 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen1.

- Account: Set this to the name of the account.

- OAuthClientId: Set this to the application Id of the app you created.

- OAuthClientSecret: Set this to the key generated for the app you created.

- TenantId: Set this to the tenant Id. See the documentation for the TenantId property for more information on how to acquire this.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.

Authenticating to a Gen 2 DataLakeStore Account

To authenticate against a Gen 2 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen2.

- Account: Set this to the name of the account.

- FileSystem: Set this to the file system which will be used for this account.

- AccessKey: Set this to the access key which will be used to authenticate the calls to the API. See the documentation for the AccessKey property for more information on how to acquire this.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.

- After configuring the connection, click Save & Test to confirm a successful connection.
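The two property sets above can be summarized in a short Python sketch that checks a set of properties against the schema it targets before you type them into the connection form. The `Key=Value;` connection-string layout, the helper name `build_connection_string`, and the placeholder values are assumptions made for illustration; the API Server itself collects these values through its UI.

```python
# Required connection properties per schema, as listed in the steps above.
REQUIRED = {
    "ADLSGen1": ["Account", "OAuthClientId", "OAuthClientSecret", "TenantId"],
    "ADLSGen2": ["Account", "FileSystem", "AccessKey"],
}


def build_connection_string(props):
    """Validate props and join them as 'Key=Value;' pairs (layout is an assumption)."""
    schema = props.get("Schema")
    if schema not in REQUIRED:
        raise ValueError(f"Schema must be one of {sorted(REQUIRED)}, got {schema!r}")
    missing = [name for name in REQUIRED[schema] if not props.get(name)]
    if missing:
        raise ValueError(f"Missing properties for {schema}: {', '.join(missing)}")
    # Directory is optional; the root directory is used when it is omitted.
    return "".join(f"{key}={value};" for key, value in props.items())


gen2 = {
    "Schema": "ADLSGen2",
    "Account": "myaccount",        # placeholder value
    "FileSystem": "myfilesystem",  # placeholder value
    "AccessKey": "REPLACE_ME",     # placeholder value
}
print(build_connection_string(gen2))
```

A Gen 1 dictionary would instead carry `OAuthClientId`, `OAuthClientSecret`, and `TenantId`; leaving any of them out raises a `ValueError` that names the missing properties.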

Configure API Server Users

Next, create a user to access your Azure Data Lake Storage data through the API Server. You can add and configure users on the Users page. Follow the steps below to configure and create a user:

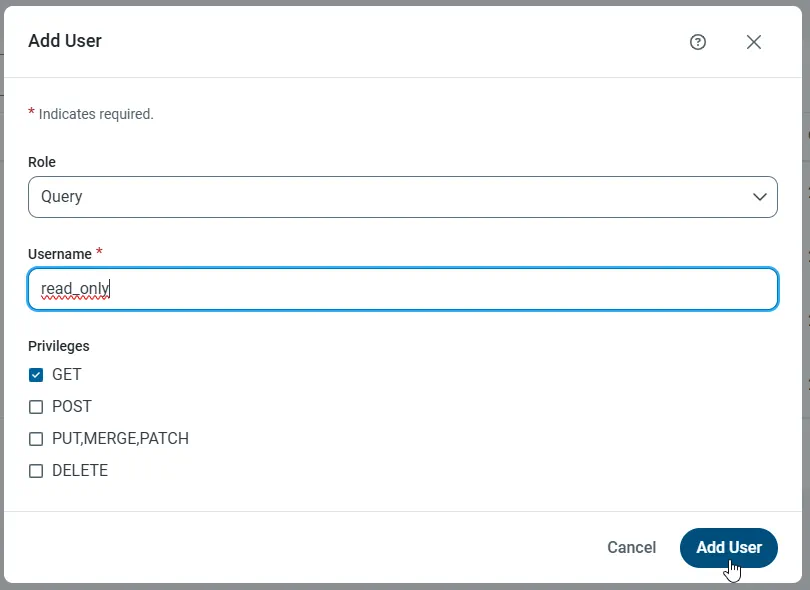

- On the Users page, click Add User to open the Add User dialog.

-

Next, set the Role, Username, and Privileges properties and then click Add User.

-

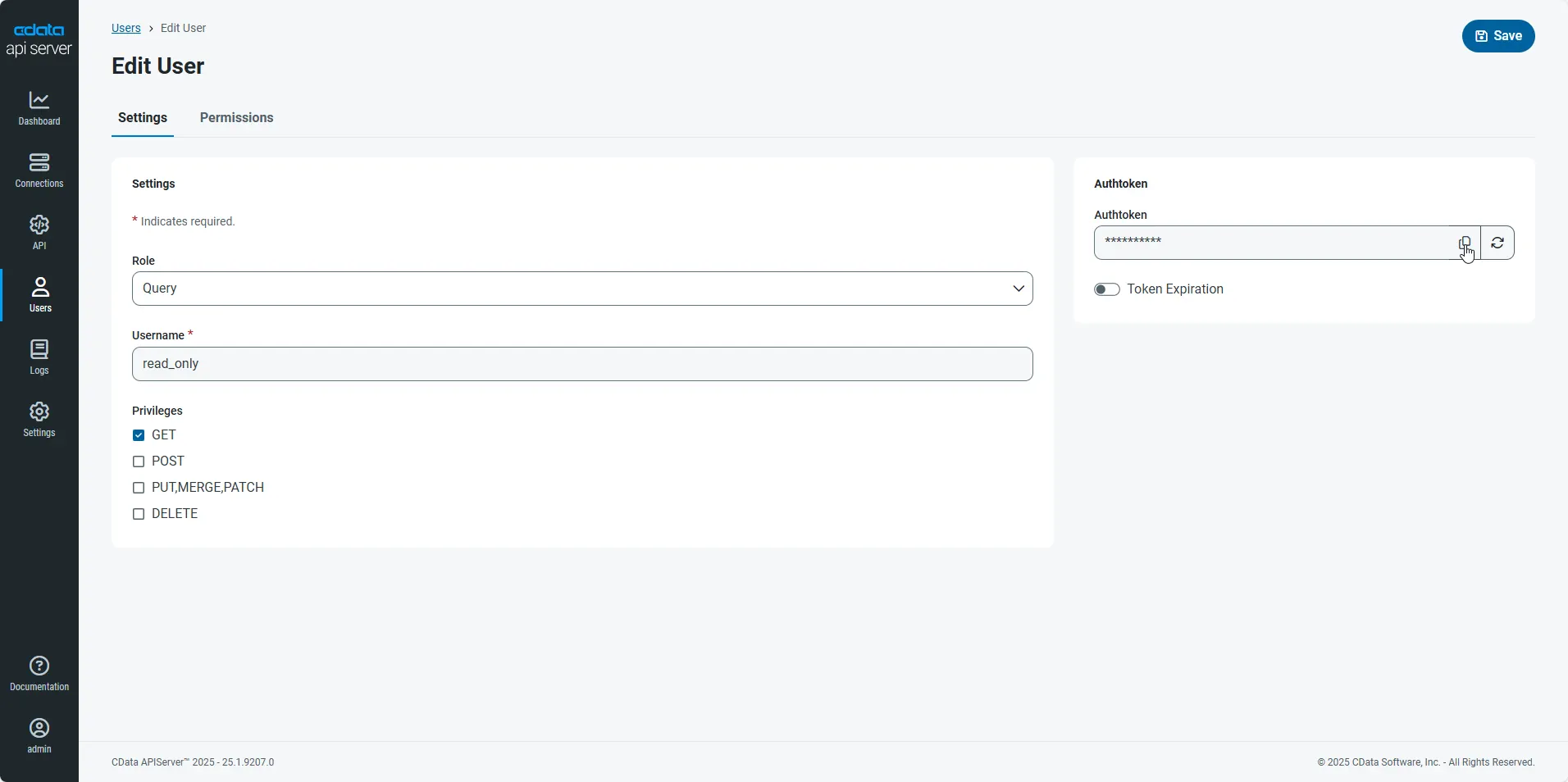

An Authtoken is then generated for the user. You can find the Authtoken and other information for each user on the Users page:
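As a rough illustration of how a client might present these credentials, the Python sketch below builds an HTTP request that sends the username and Authtoken as HTTP Basic credentials, mirroring the Username and Password method used in the Spotfire steps later in this article. Treat the use of Basic authentication, the user name `spotfire_user`, and the token placeholder as assumptions; check your API Server's authentication settings before relying on them.

```python
import base64
import urllib.request

SERVICE_URL = "http://localhost:8080/api.rsc"  # example endpoint used in this article


def build_request(url, username, authtoken):
    """Return a Request carrying HTTP Basic credentials (username + authtoken)."""
    credentials = f"{username}:{authtoken}".encode("utf-8")
    encoded = base64.b64encode(credentials).decode("ascii")
    request = urllib.request.Request(url)
    request.add_header("Authorization", f"Basic {encoded}")
    request.add_header("Accept", "application/json")
    return request


# Hypothetical user name and token placeholder.
request = build_request(f"{SERVICE_URL}/Resources", "spotfire_user", "REPLACE_WITH_AUTHTOKEN")
print(request.full_url)

# Sending the request requires a running API Server:
# with urllib.request.urlopen(request) as response:
#     print(response.status)
```

The network call is left commented out so the sketch runs without a server; uncomment it once the API Server is reachable.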

Creating API Endpoints for Azure Data Lake Storage

Having created a user, you are ready to create API endpoints for the Azure Data Lake Storage tables:

-

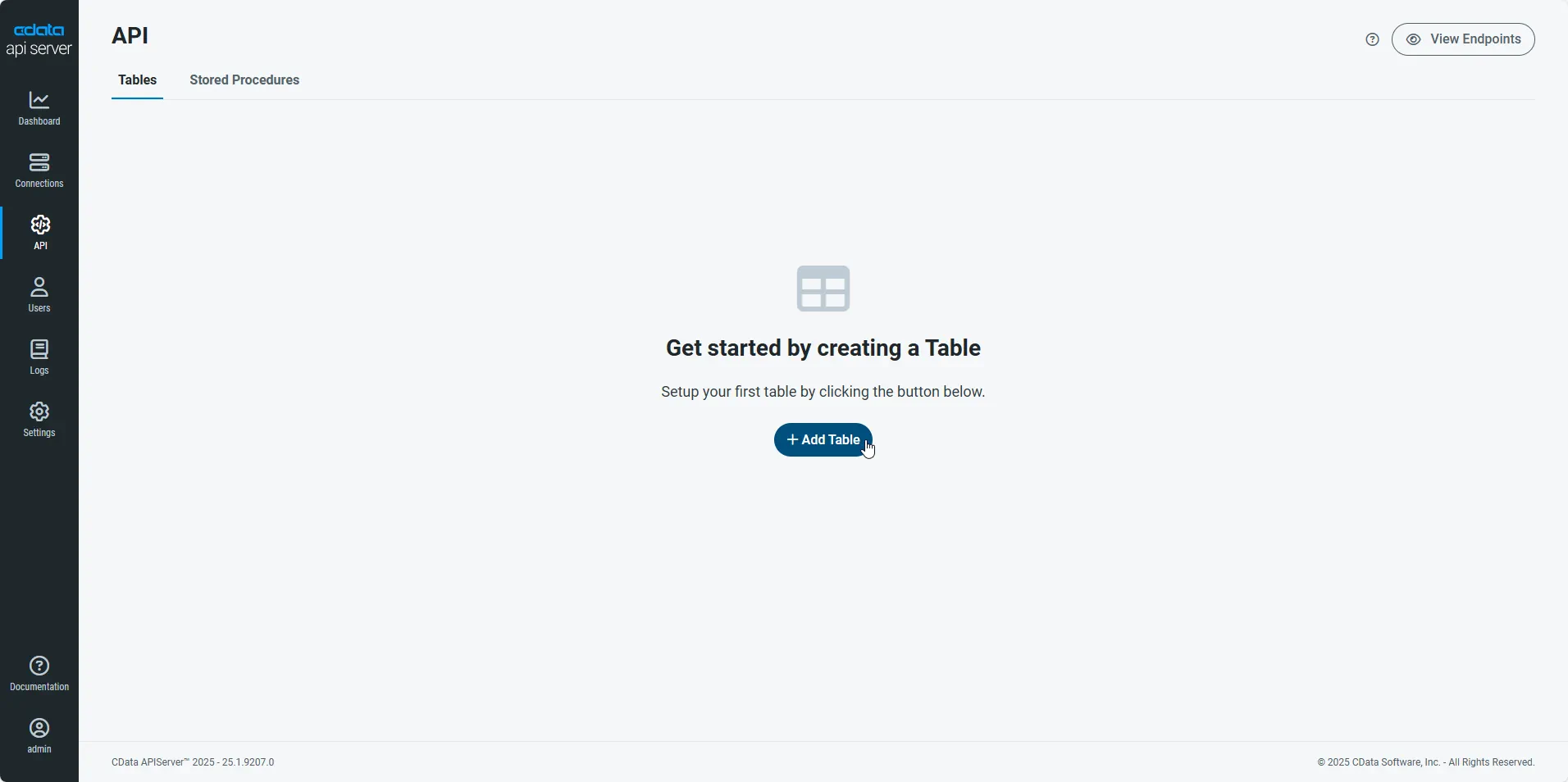

First, navigate to the API page and then click

Add Table

.

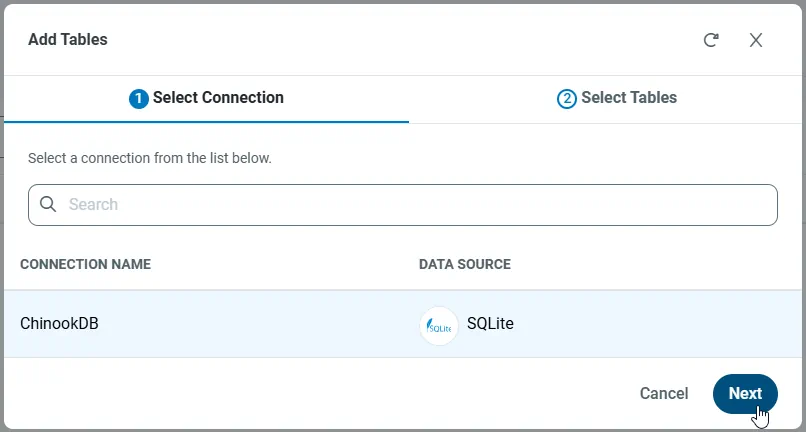

-

Select the connection you wish to access and click Next.

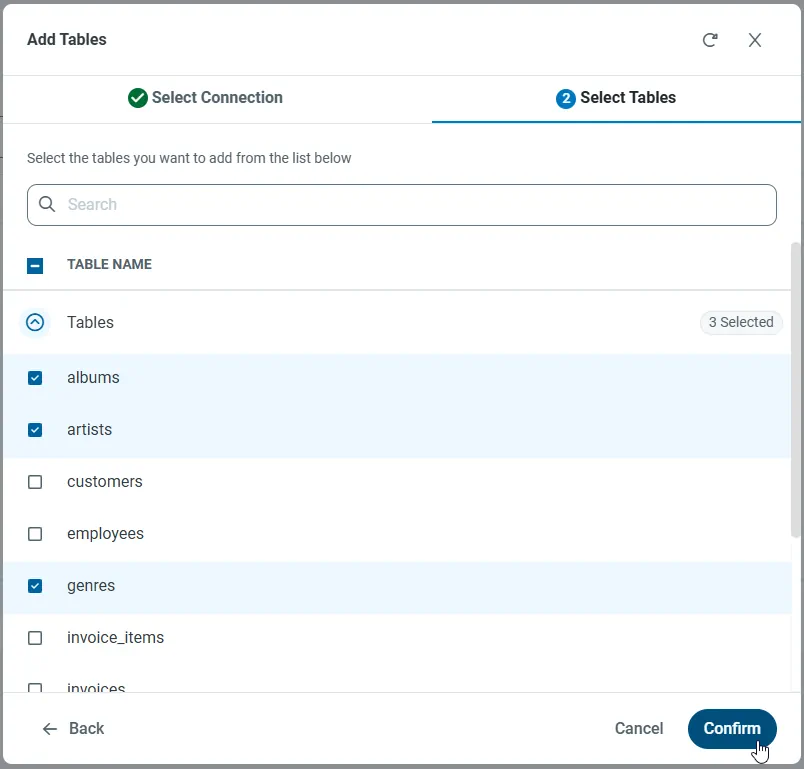

-

With the connection selected, create endpoints by selecting each table and then clicking Confirm.

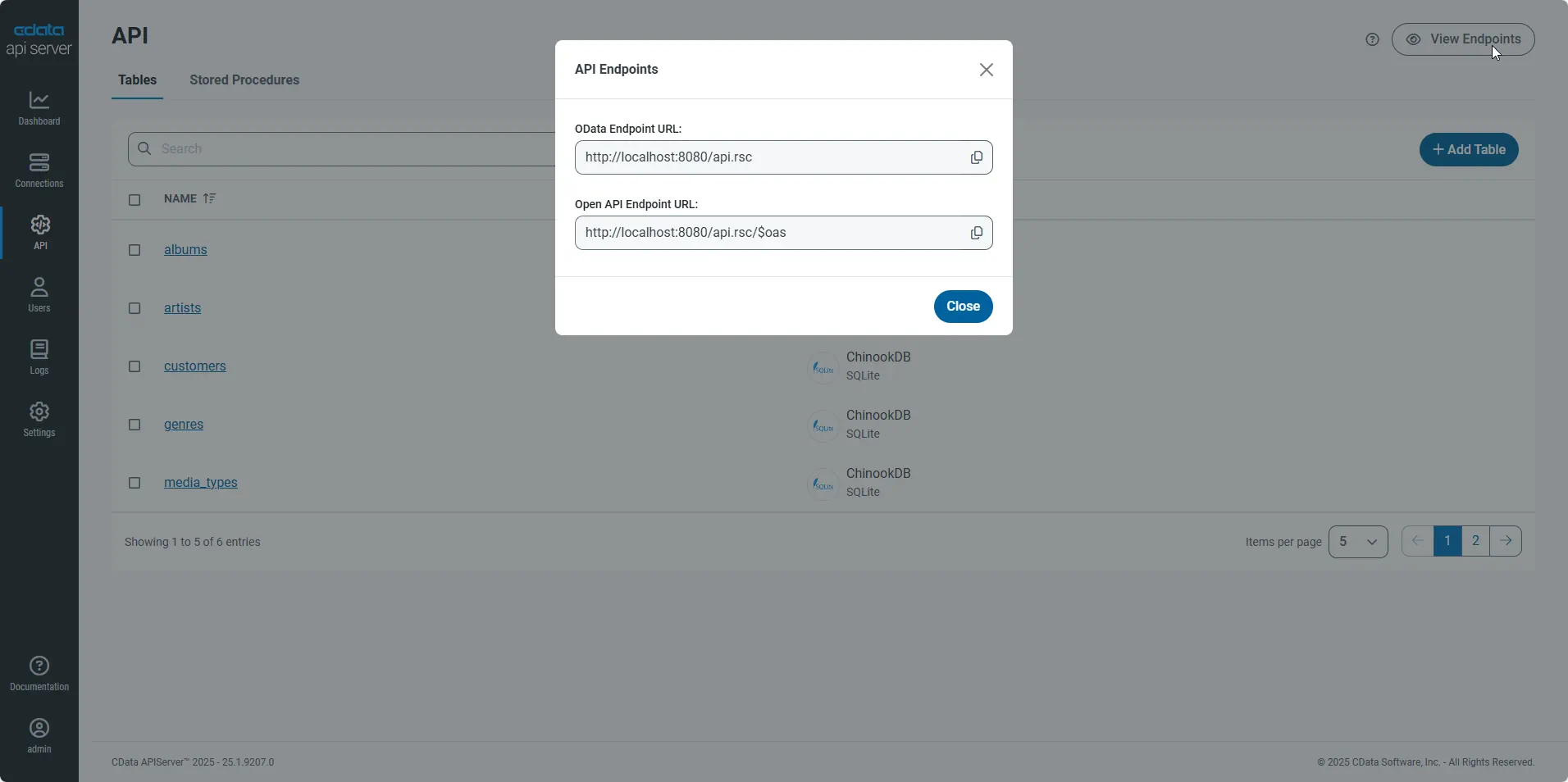

Gather the OData URL

Having configured a connection to Azure Data Lake Storage data, created a user, and added resources to the API Server, you now have an easily accessible REST API based on the OData protocol for those resources. From the API page in API Server, you can view and copy the API endpoints.
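Because the endpoints follow the OData protocol, a client can narrow a request with standard query options such as `$select`, `$filter`, and `$top`. The Python sketch below assembles such URLs; the base URL is the example endpoint used later in this article, `Resources` is the table used in the Spotfire example, and the helper name `odata_url` is hypothetical.

```python
from urllib.parse import quote, urlencode

BASE_URL = "http://localhost:8080/api.rsc"  # example endpoint used in this article


def odata_url(resource, select=None, filter_expr=None, top=None):
    """Build an OData query URL for a resource exposed by the API Server."""
    options = {}
    if select:
        options["$select"] = ",".join(select)
    if filter_expr:
        options["$filter"] = filter_expr
    if top is not None:
        options["$top"] = str(top)
    url = f"{BASE_URL}/{quote(resource)}"
    if options:
        # Keep '$' and ',' readable; everything else is percent-encoded.
        url += "?" + urlencode(options, safe="$,", quote_via=quote)
    return url


print(odata_url("Resources", select=["FullPath", "Permission"], top=10))
```

Spotfire issues its own queries once the connection is configured, so a helper like this is mainly useful for checking an endpoint from a script or browser first.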

Create Data Visualizations on External Azure Data Lake Storage Data

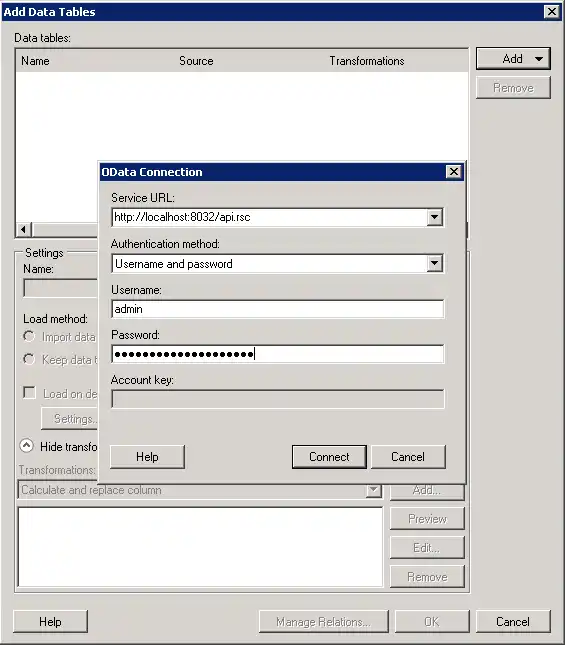

- Open Spotfire and click Add Data Tables -> OData.

- In the OData Connection dialog, enter the following information:

  - Service URL: Enter the API Server's OData endpoint. For example: http://localhost:8080/api.rsc

  - Authentication Method: Select Username and Password.

  - Username: Enter the username of an API Server user. You can create API users on the Users page of the API Server.

  - Password: Enter the authtoken of an API Server user.

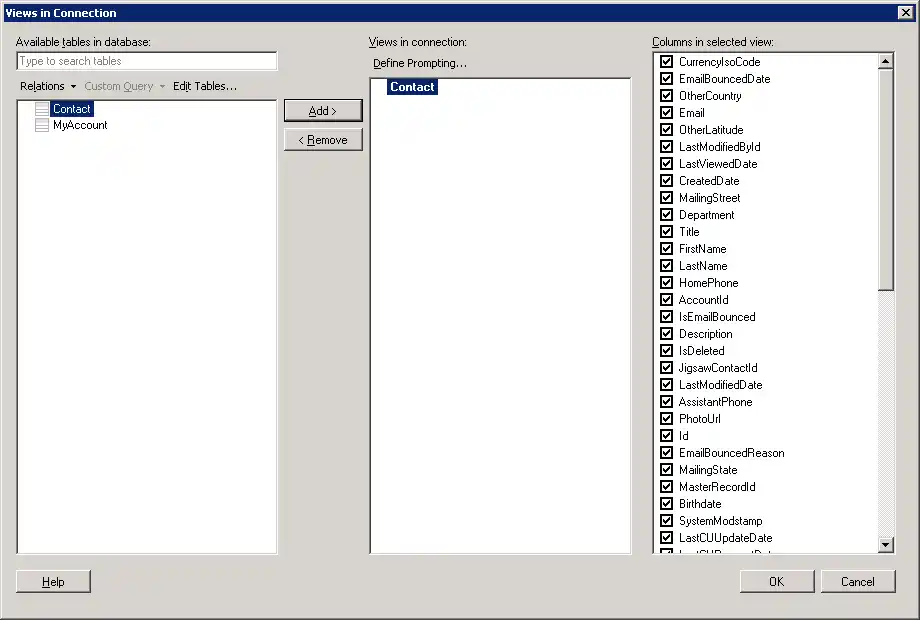

- Select the tables and columns you want to add to the dashboard. This example uses Resources.

- If you want to work with the live data, click the Keep Data Table External option. This option enables your dashboards to reflect changes to the data in real time.

If you want to load the data into memory and process the data locally, click the Import Data Table option. This option is better for offline use or if a slow network connection is making your dashboard less interactive.

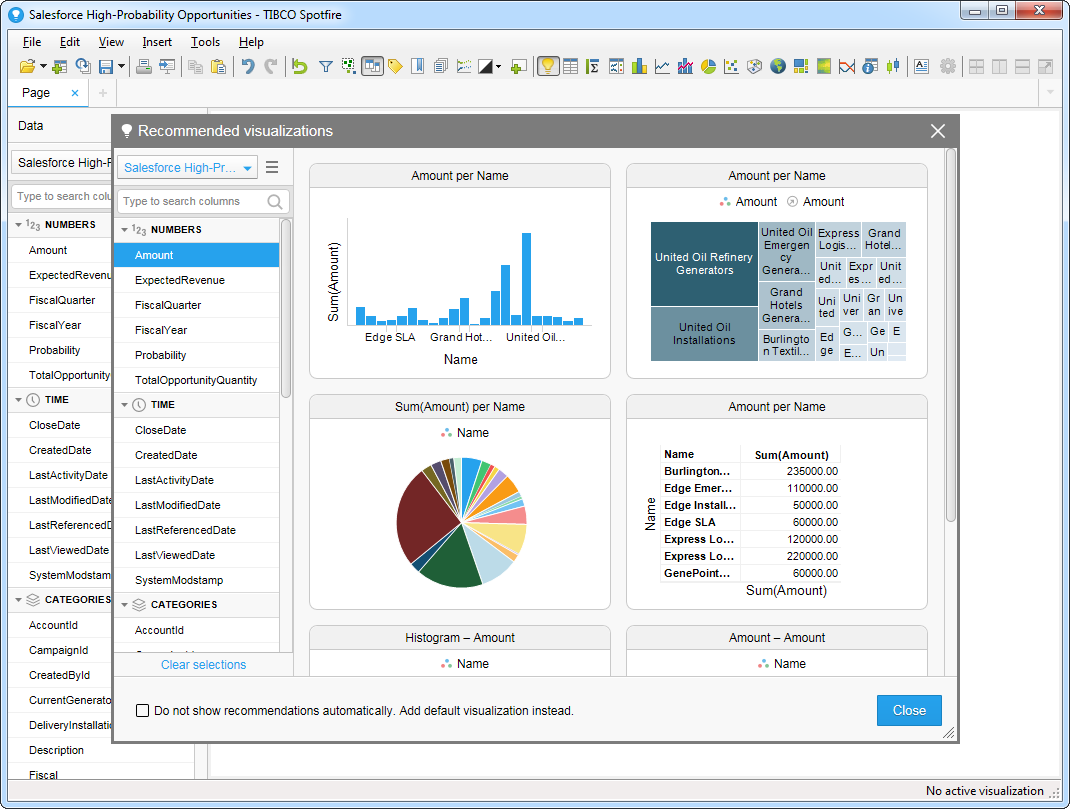

- After adding tables, the Recommended Visualizations wizard is displayed. When you select a table, Spotfire uses the column data types to detect number, time, and category columns. This example uses Permission in the Numbers section and FullPath in the Categories section.
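To make the idea of type-based detection concrete, the Python sketch below groups columns into number, time, and category buckets based on their OData (Edm) primitive types. It is only an illustration: Spotfire's actual detection rules are not described in this article, and the column types shown (including the `LastModified` column) are assumed for the example rather than taken from the Resources table's metadata.

```python
# OData v4 primitive types grouped into the buckets the wizard displays.
NUMBER_TYPES = {
    "Edm.Byte", "Edm.SByte", "Edm.Int16", "Edm.Int32", "Edm.Int64",
    "Edm.Single", "Edm.Double", "Edm.Decimal",
}
TIME_TYPES = {"Edm.Date", "Edm.DateTimeOffset", "Edm.TimeOfDay", "Edm.Duration"}


def classify(columns):
    """Group column names by the kind of data their Edm type represents."""
    groups = {"Numbers": [], "Time": [], "Categories": []}
    for name, edm_type in columns.items():
        if edm_type in NUMBER_TYPES:
            groups["Numbers"].append(name)
        elif edm_type in TIME_TYPES:
            groups["Time"].append(name)
        else:
            # Strings, booleans, and anything unrecognized are treated as categories.
            groups["Categories"].append(name)
    return groups


# Assumed column types, for illustration only.
columns = {
    "FullPath": "Edm.String",
    "Permission": "Edm.Int32",
    "LastModified": "Edm.DateTimeOffset",  # hypothetical column
}
print(classify(columns))
```

Under these assumed types, `Permission` lands in Numbers and `FullPath` in Categories, matching the choices made in the example above.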

After adding several visualizations in the Recommended Visualizations wizard, you can make other modifications to the dashboard. For example, you can apply a filter: click the Filter button to display the available filters for each query in the Filters pane.