Automated Continuous Elasticsearch Data Replication to OneLake in Microsoft Fabric

Use CData Sync for automated, continuous, customizable Elasticsearch data replication to OneLake in Microsoft Fabric.

Always-on applications rely on automatic failover capabilities and real-time data access. CData Sync integrates live Elasticsearch data into your OneLake instance in Microsoft Fabric, allowing you to consolidate all your data into a single location for archiving, reporting, analytics, machine learning, artificial intelligence and more.

About Elasticsearch Data Integration

Accessing and integrating live data from Elasticsearch has never been easier with CData. Customers rely on CData connectivity to:

- Access both the SQL endpoints and REST endpoints, optimizing connectivity and offering more options when it comes to reading and writing Elasticsearch data.

- Connect to virtually every Elasticsearch instance from version 2.2 onward, as well as Open Source Elasticsearch subscriptions.

- Always receive a relevance score with query results, without explicitly calling the SCORE() function. This simplifies access from third-party tools and makes it easy to see how results rank by text relevance.

- Search through multiple indices, relying on Elasticsearch to manage and process the query and results instead of the client machine.
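The last two points build on standard Elasticsearch REST behavior: a search can target several indices through a comma-separated index list in the request path, and every hit in the response carries a relevance `_score`. The following Python is a minimal sketch of those two mechanics only, not CData's implementation. The server URL, index names, field name, and function names are hypothetical placeholders, and no request is actually sent.

```python
import json
from urllib.parse import quote


def build_multi_index_search(server: str, indices: list[str],
                             text_field: str, text: str) -> tuple[str, str]:
    """Return (url, json_body) for a full-text search across several indices."""
    # Elasticsearch accepts a comma-separated list of index names in the path,
    # so the cluster (not the client) fans the query out and merges the results.
    path = ",".join(quote(name, safe="") for name in indices)
    url = f"{server.rstrip('/')}/{path}/_search"
    # A "match" query is a full-text query, so each hit is scored for relevance.
    body = json.dumps({"query": {"match": {text_field: text}}})
    return url, body


def rank_hits(response: dict) -> list[tuple[float, dict]]:
    """Extract (_score, _source) pairs from a search response, best match first."""
    hits = response.get("hits", {}).get("hits", [])
    pairs = [(hit["_score"], hit["_source"]) for hit in hits]
    # Elasticsearch already sorts by _score by default; sorting again keeps
    # the function correct even if the caller passes hits in another order.
    return sorted(pairs, key=lambda pair: pair[0], reverse=True)
```

For example, `build_multi_index_search("https://localhost:9200", ["orders", "customers"], "description", "blue shirt")` yields a URL ending in `/orders,customers/_search`.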

Users frequently integrate Elasticsearch data with analytics tools such as Crystal Reports, Power BI, and Excel, and leverage our tools to enable a single, federated access layer to all of their data sources, including Elasticsearch.

For more information on CData's Elasticsearch solutions, check out our Knowledge Base article: CData Elasticsearch Driver Features & Differentiators.

Getting Started

Configure OneLake as a Replication Destination

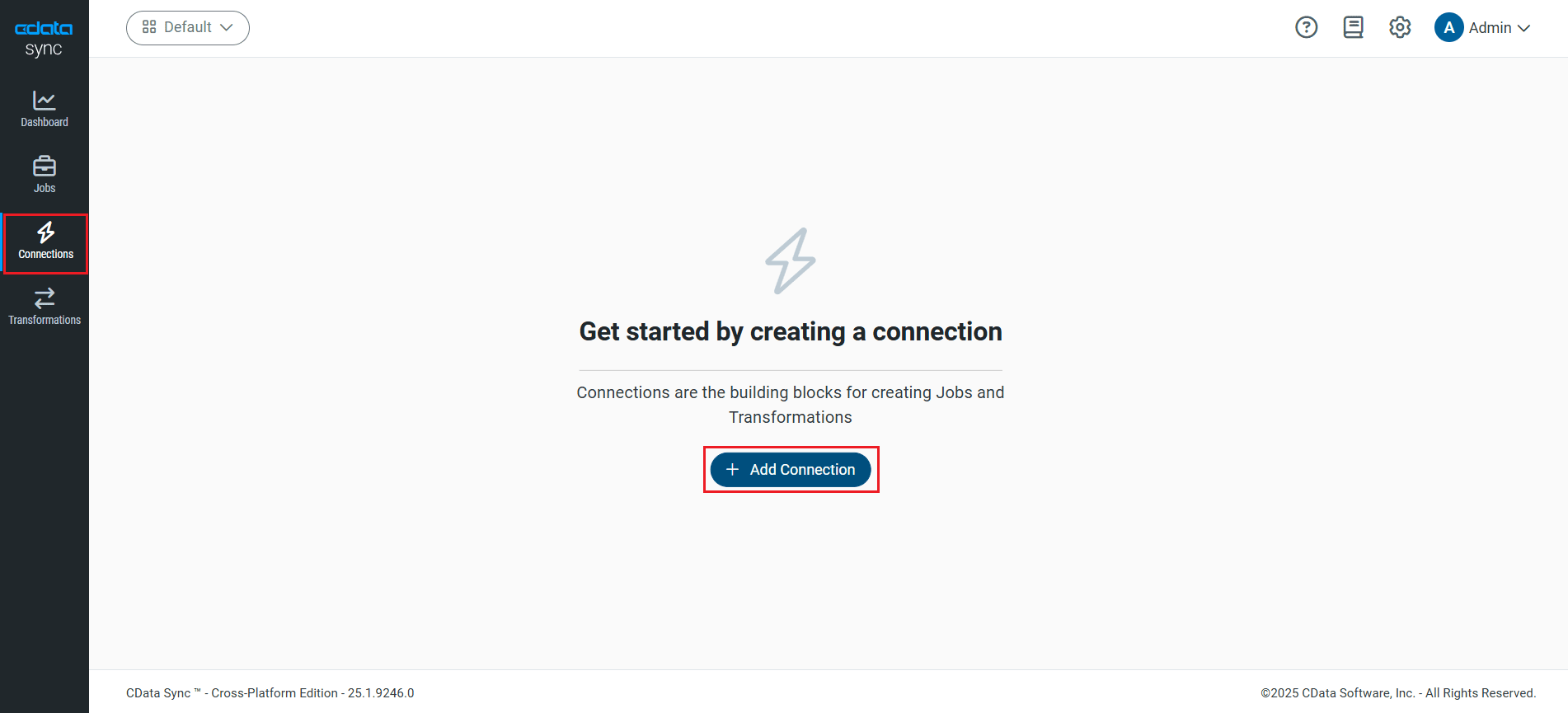

Using CData Sync, you can replicate Elasticsearch data to OneLake. To add a replication destination, navigate to the Connections tab.

- Click Add Connection.

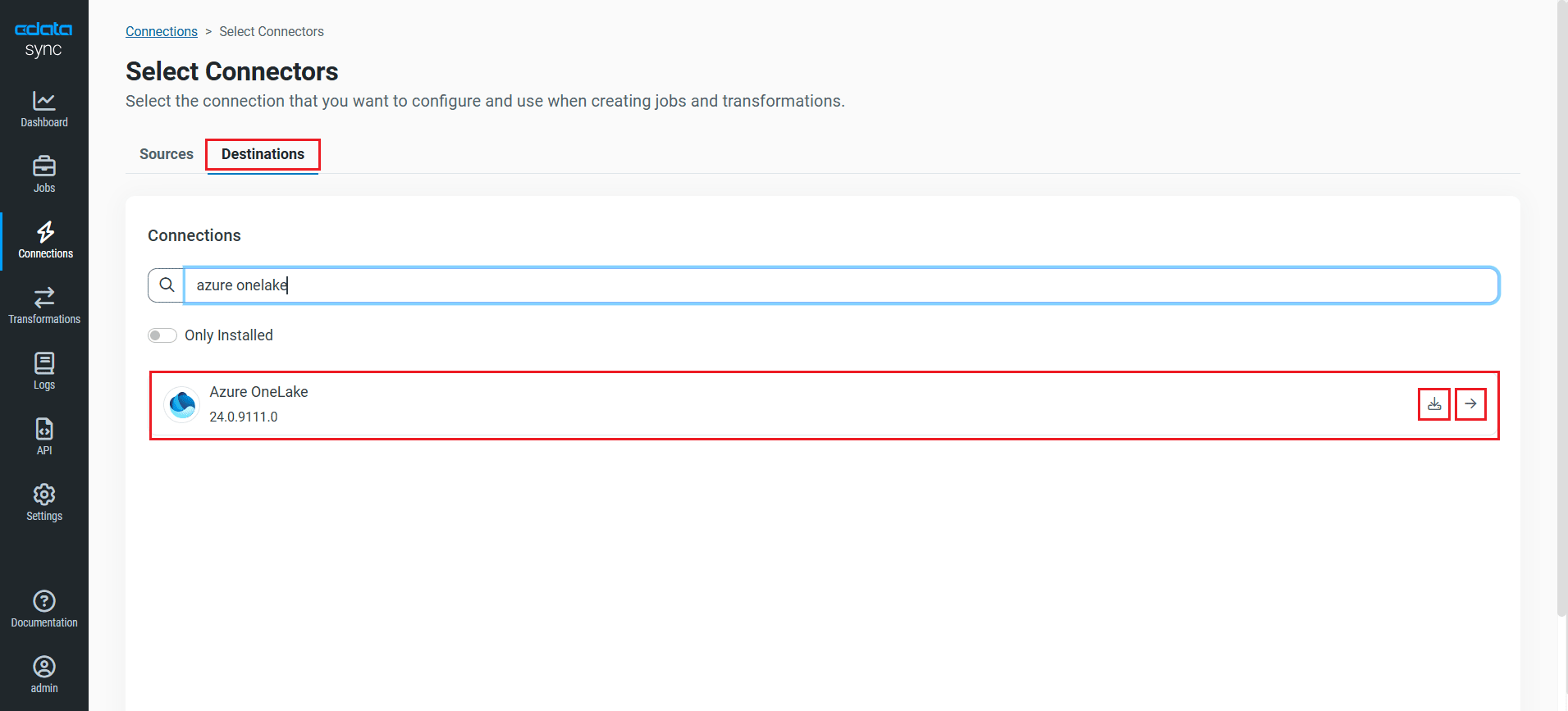

- Click the Destinations tab and locate the Azure OneLake connector.

- Click the Configure Connection icon at the end of that row to open the New Connection page. If the Configure Connection icon is not available, click the Download Connector icon to install the OneLake connector. For more information about installing new connectors, see Connections in the Help documentation.

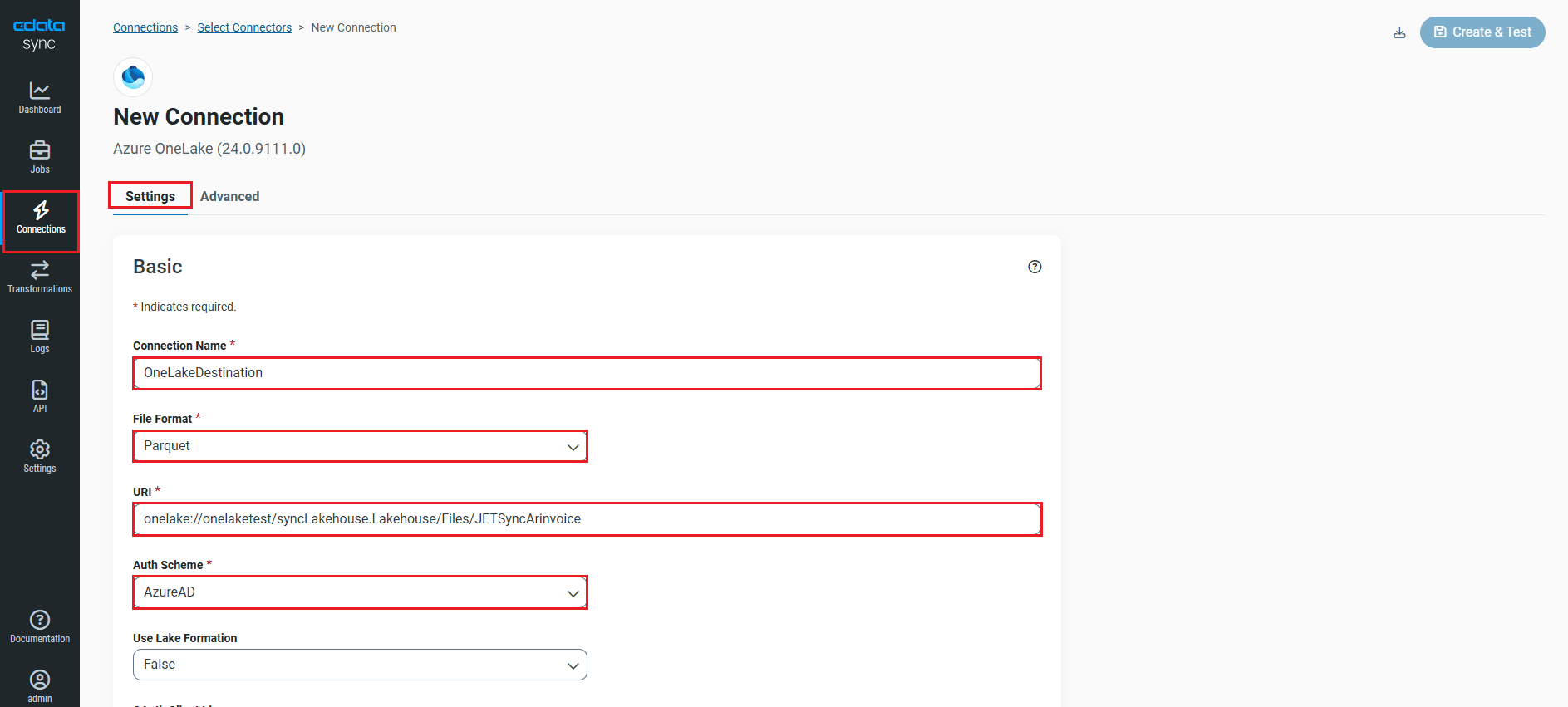

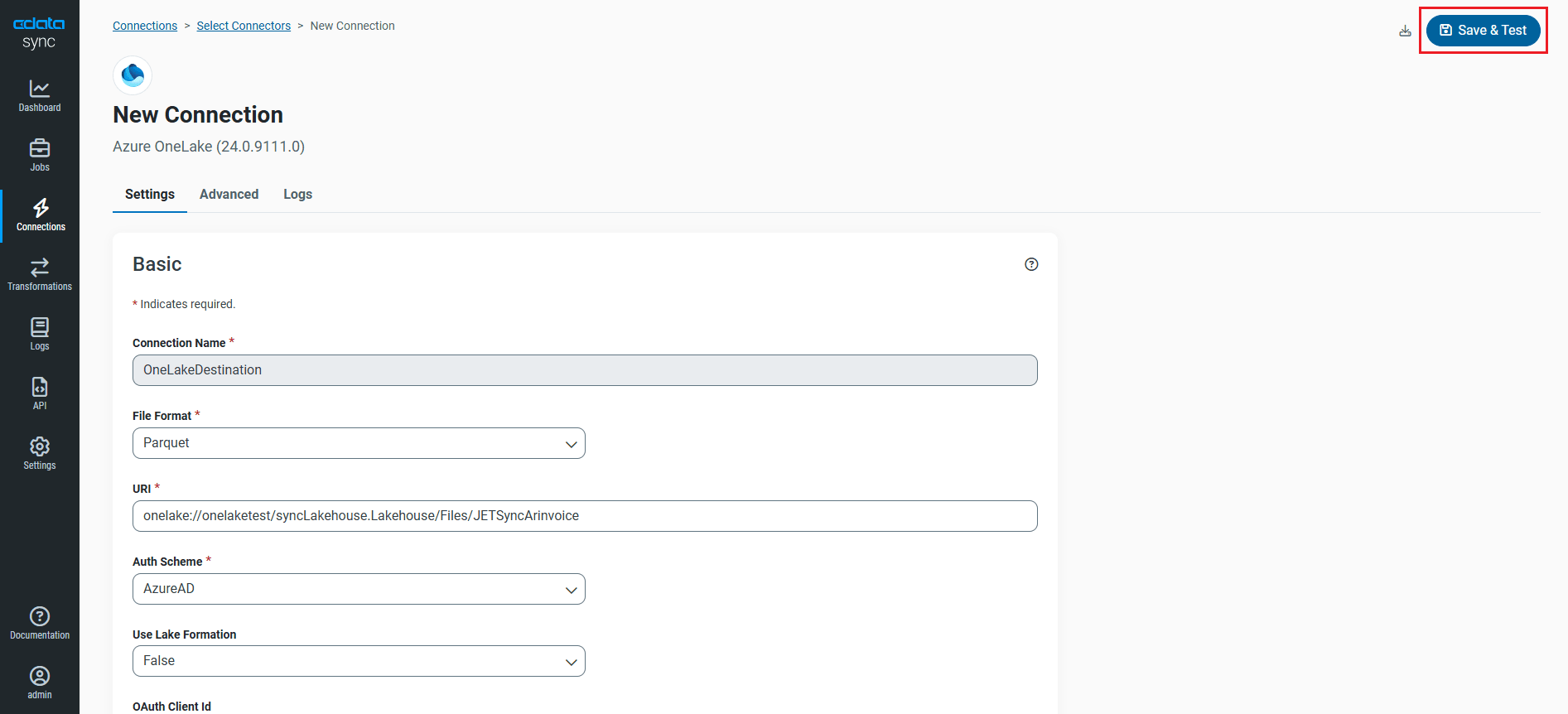

- After the connector is added, enter the following Basic connection properties under Settings to connect to OneLake:

  - Connection Name: Enter a connection name of your choice.

  - File Format: Select the file format that you want to use. Sync supports the CSV, PARQUET, and AVRO file formats.

  - URI: Enter the path of the file system and folder that contains your files (for example, onelake://Workspace/Test.LakeHouse/Files/CustomFolder).

  - Auth Scheme: To connect with an Azure Active Directory (AD) user account, select Azure AD for Auth Scheme. CData Sync provides an embedded OAuth application to connect with, so no additional properties are required.

  - Data Model: Specify the data format to use when parsing documents in the selected file format and generating the database metadata.

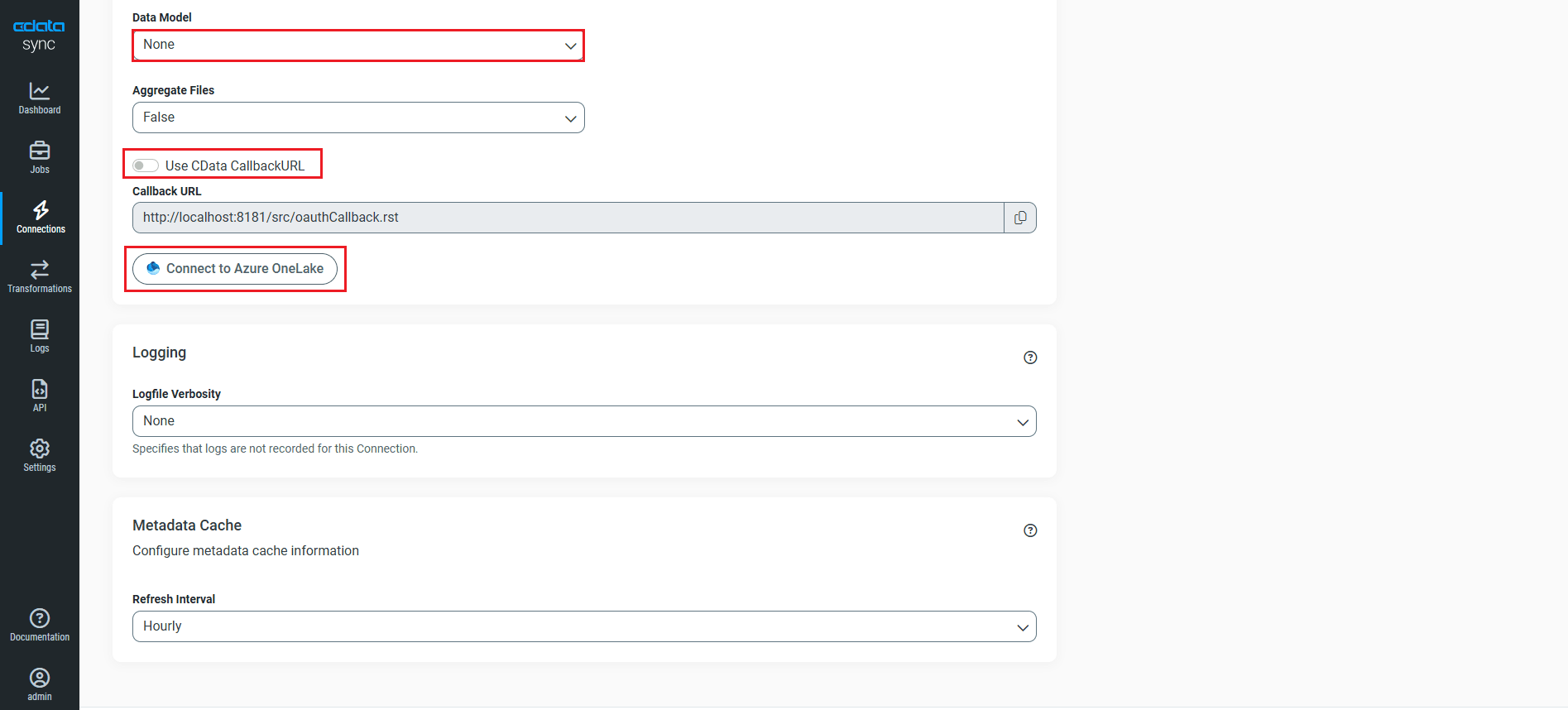

- If you are hosting CData Sync (locally or in your own cloud):

  - Use CData CallbackURL: Disable the toggle.

  - Callback URL: Enter the Callback URL.

- If you are using CData Sync Cloud, leave the Use CData CallbackURL toggle enabled.

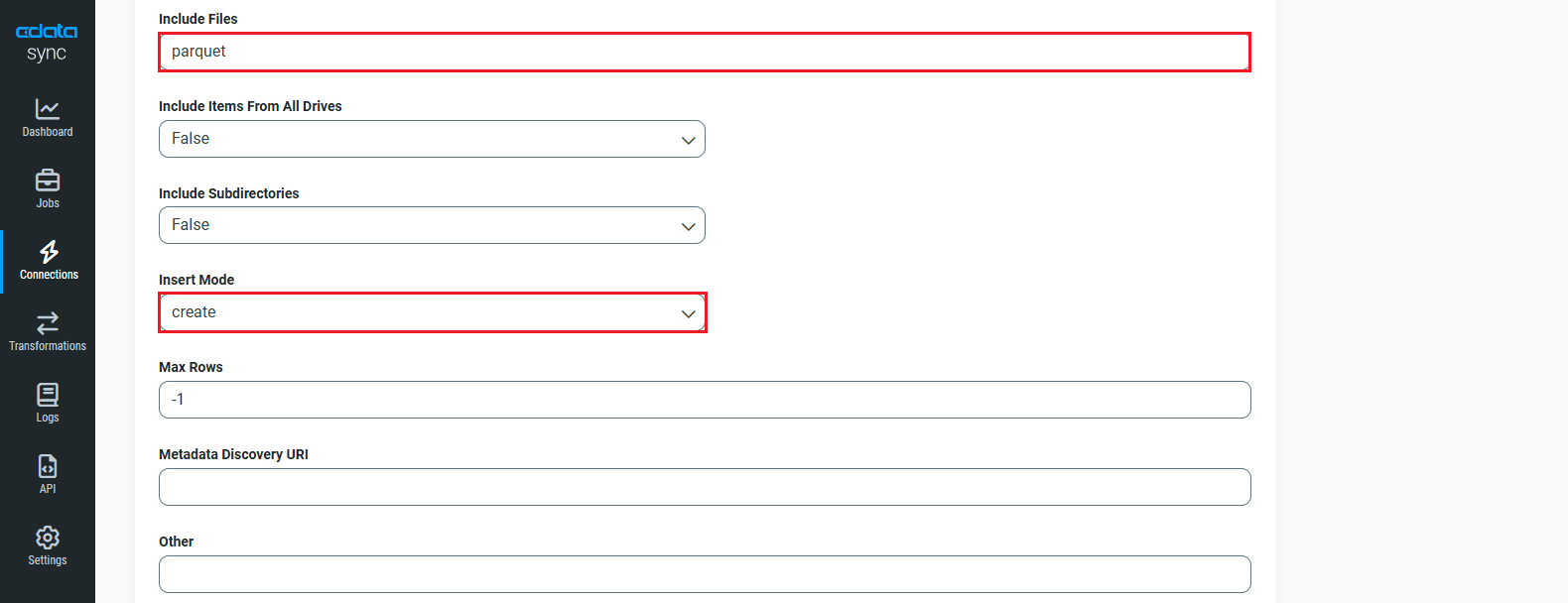

- Navigate to the Advanced tab and scroll down to the Miscellaneous section.

- In Include Files, enter the file format you selected earlier.

- Select Create from the Insert Mode dropdown. The other Insert Mode options are Overwrite and Batch.

- Now, navigate back to Basic settings and click Connect to Azure OneLake.

- Once connected, click Create & Test to save the connection.

You are now connected to OneLake and can use it as both a source and a destination.
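If you script or review Sync deployments, it can help to check the destination settings before saving them. The Python below is a hypothetical pre-flight check that mirrors the Basic settings described above; `OneLakeDestination` and `check_destination` are illustrative names, not part of any CData Sync API. The `onelake://` scheme check is an assumption based only on the example URI in this article.

```python
from dataclasses import dataclass
from typing import Optional

# File formats the article lists as supported by the OneLake destination.
SUPPORTED_FORMATS = {"CSV", "PARQUET", "AVRO"}


@dataclass
class OneLakeDestination:
    """Hypothetical mirror of the Basic settings; not a CData Sync API."""
    connection_name: str
    file_format: str
    uri: str
    self_hosted: bool
    callback_url: Optional[str] = None


def check_destination(dest: OneLakeDestination) -> list[str]:
    """Return a list of problems with the settings (empty if none are found)."""
    problems = []
    if dest.file_format.upper() not in SUPPORTED_FORMATS:
        problems.append(f"unsupported file format: {dest.file_format}")
    # Assumption: URIs follow the article's example,
    # onelake://Workspace/Test.LakeHouse/Files/CustomFolder
    if not dest.uri.startswith("onelake://"):
        problems.append("URI should start with onelake://")
    # Self-hosted Sync disables Use CData CallbackURL and supplies its own URL.
    if dest.self_hosted and not dest.callback_url:
        problems.append("self-hosted Sync requires a Callback URL")
    return problems
```

A Sync Cloud configuration with the example URI and PARQUET format returns an empty list; a self-hosted configuration without a callback URL is flagged.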

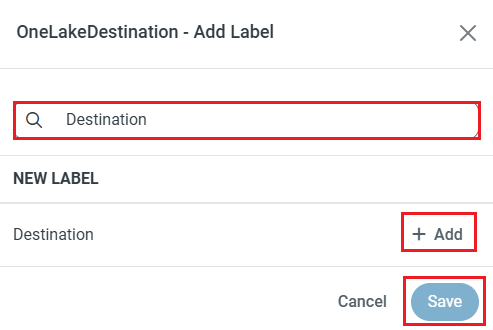

NOTE: You can use the Label feature to add a label for a source or a destination.

In this article, we demonstrate how to load Elasticsearch data into OneLake, using OneLake as the destination.

Configure the Elasticsearch Connection

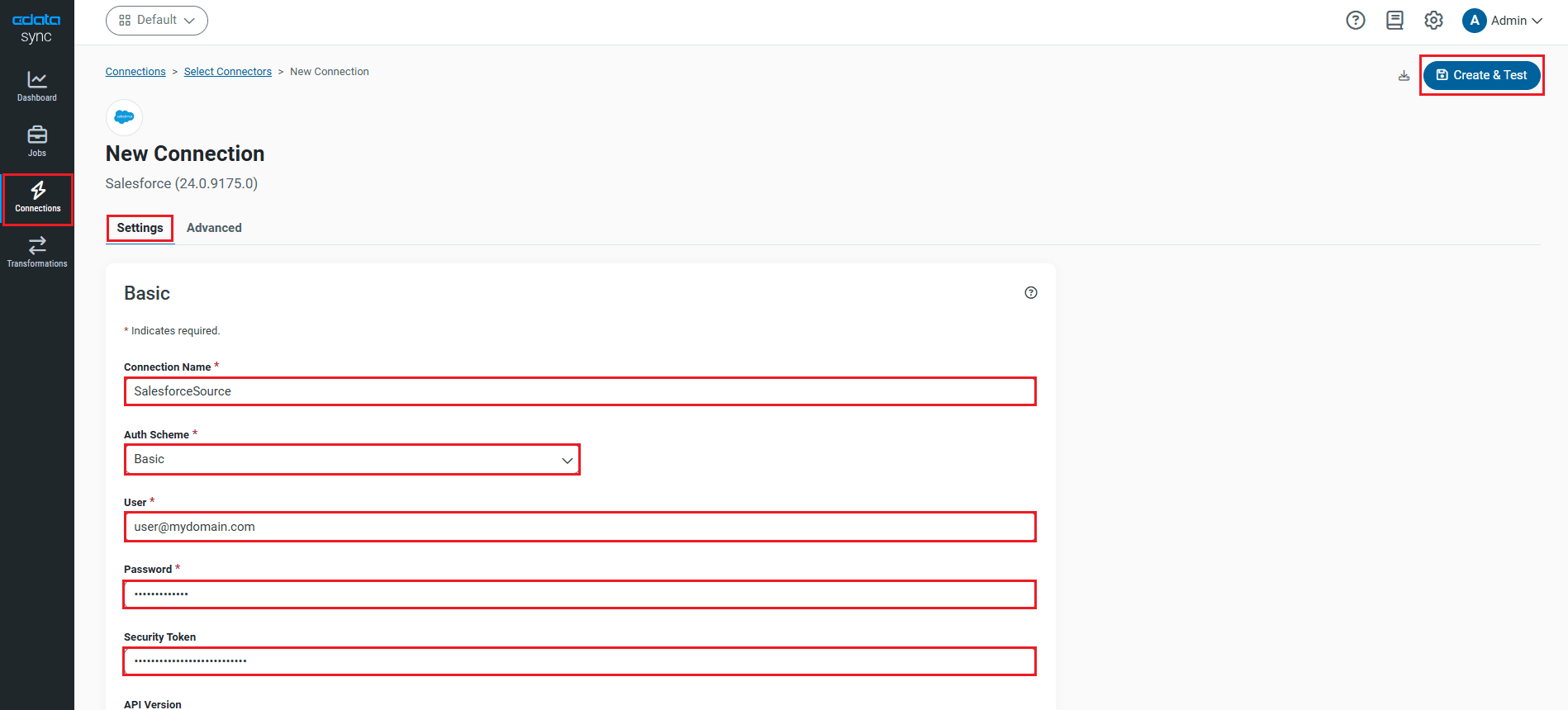

To add a connection to your Elasticsearch account, navigate to the Connections tab.

- Click Add Connection.

- Select a source (Elasticsearch).

- Configure the connection properties.

Set the Server and Port connection properties to connect. To authenticate, set the User and Password properties, PKI (public key infrastructure) properties, or both. To use PKI, set the SSLClientCert, SSLClientCertType, SSLClientCertSubject, and SSLClientCertPassword properties.

The data provider uses X-Pack Security for TLS/SSL and authentication. To connect over TLS/SSL, prefix the Server value with 'https://'. Note: TLS/SSL and client authentication must be enabled on X-Pack to use PKI.

Once the data provider connects, X-Pack performs user authentication and grants role permissions based on the realms you have configured.

- Click Connect to Elasticsearch to ensure that the connection is configured properly.

- Click Save & Test to save the changes.
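The authentication rules above (User/Password, PKI, or both; PKI only over TLS/SSL; TLS/SSL selected by an `https://` prefix on Server) can be captured in a small helper. The Python below is a sketch that assembles the properties named in this article into a `Property=Value;` string, the style CData connection strings generally use; treat that output format, and the function name `build_es_connection_string`, as assumptions rather than Sync's own API.

```python
from typing import Optional

# PKI properties named in the article.
PKI_PROPERTIES = {"SSLClientCert", "SSLClientCertType",
                  "SSLClientCertSubject", "SSLClientCertPassword"}


def build_es_connection_string(server: str, port: int,
                               user: Optional[str] = None,
                               password: Optional[str] = None,
                               pki: Optional[dict] = None,
                               use_tls: bool = True) -> str:
    """Assemble Property=Value; pairs (hypothetical helper, not a CData API)."""
    if use_tls and not server.startswith("https://"):
        # TLS/SSL is requested by prefixing the Server value with https://
        server = "https://" + server.removeprefix("http://")
    props = {"Server": server, "Port": str(port)}
    if user is not None:
        props["User"] = user
        props["Password"] = password or ""
    if pki:
        # X-Pack only accepts PKI client certificates over TLS/SSL.
        if not server.startswith("https://"):
            raise ValueError("PKI requires TLS/SSL (an https:// Server value)")
        unknown = set(pki) - PKI_PROPERTIES
        if unknown:
            raise ValueError(f"unexpected PKI properties: {sorted(unknown)}")
        props.update(pki)
    if "User" not in props and not pki:
        raise ValueError("set User/Password, the PKI properties, or both")
    return "".join(f"{key}={value};" for key, value in props.items())
```

This sketch does not escape values, so a password containing `;` would need quoting in a real connection string.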

Configure Replication Queries

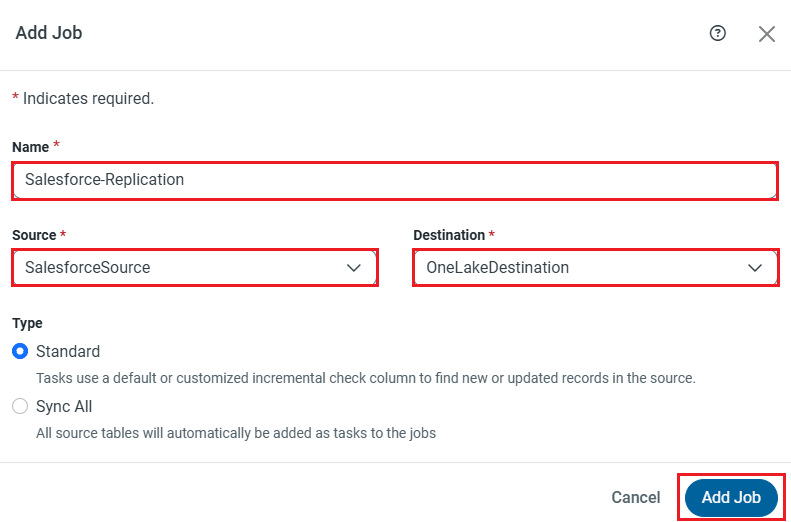

CData Sync enables you to control replication with a point-and-click interface and with SQL queries. For each replication you wish to configure, navigate to the Jobs tab and click Add Job. Select the Source and Destination for your replication.

Edit the Job

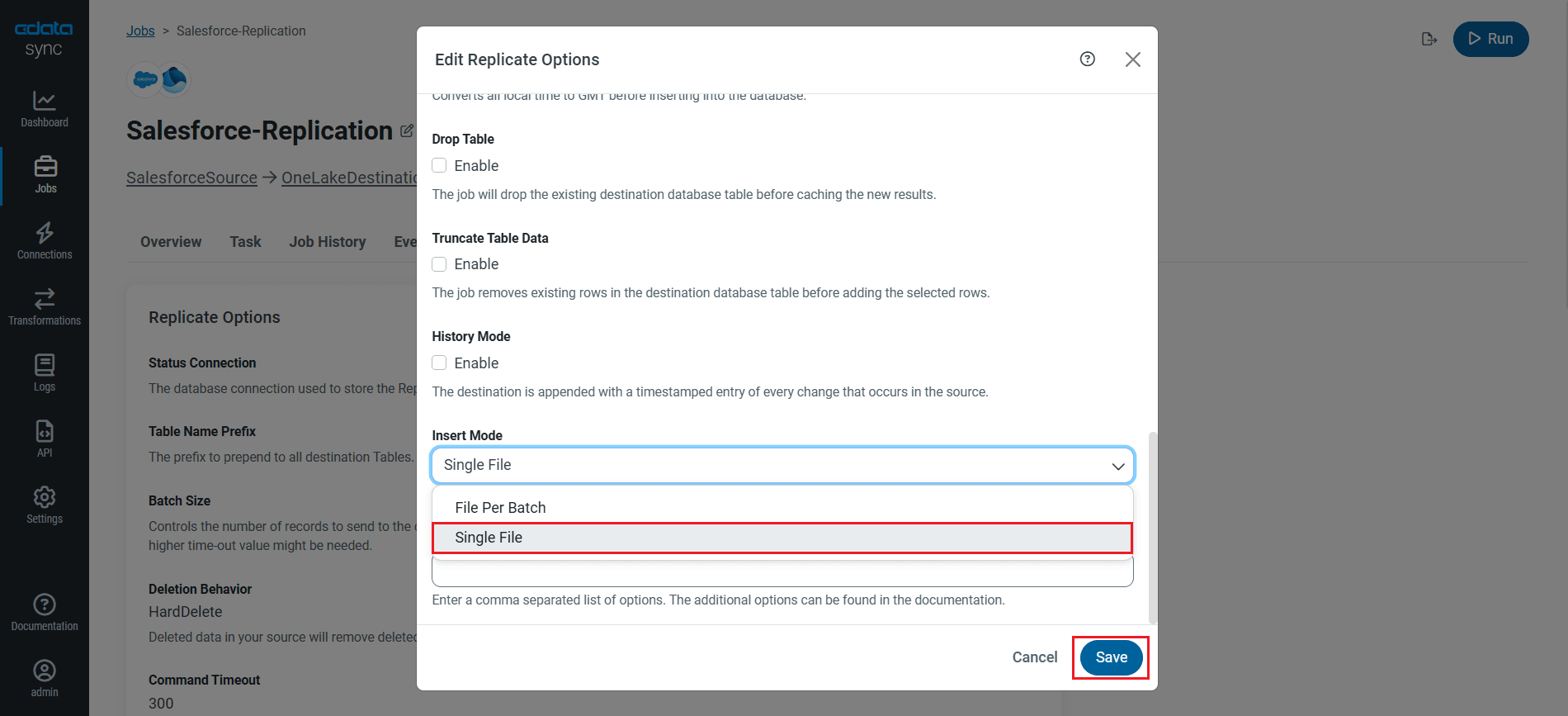

- If you selected Create as the Insert Mode in the OneLake connection, open the Advanced tab of the Job, click Edit Replicate Options, and select Single File as the Insert Mode from the dropdown.

- If you selected Batch in the OneLake connection, set the job's Insert Mode to File Per Batch.

- If you selected Overwrite in the OneLake connection, either Single File or File Per Batch works.
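Because the valid job setting depends on the connector setting, the rule is easy to get wrong across many jobs. The Python below simply restates the three cases above as a lookup table; `COMPATIBLE_JOB_MODES` and `job_mode_ok` are hypothetical names for a check you might run in your own tooling, not something Sync provides.

```python
# OneLake connector Insert Mode -> job Insert Modes that work with it,
# restated from the three cases above.
COMPATIBLE_JOB_MODES = {
    "Create": {"Single File"},
    "Batch": {"File Per Batch"},
    "Overwrite": {"Single File", "File Per Batch"},
}


def job_mode_ok(connector_mode: str, job_mode: str) -> bool:
    """Return True if the job's Insert Mode works with the connector's mode."""
    # Unknown connector modes have no compatible job modes.
    return job_mode in COMPATIBLE_JOB_MODES.get(connector_mode, set())
```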

Replicate Entire Tables

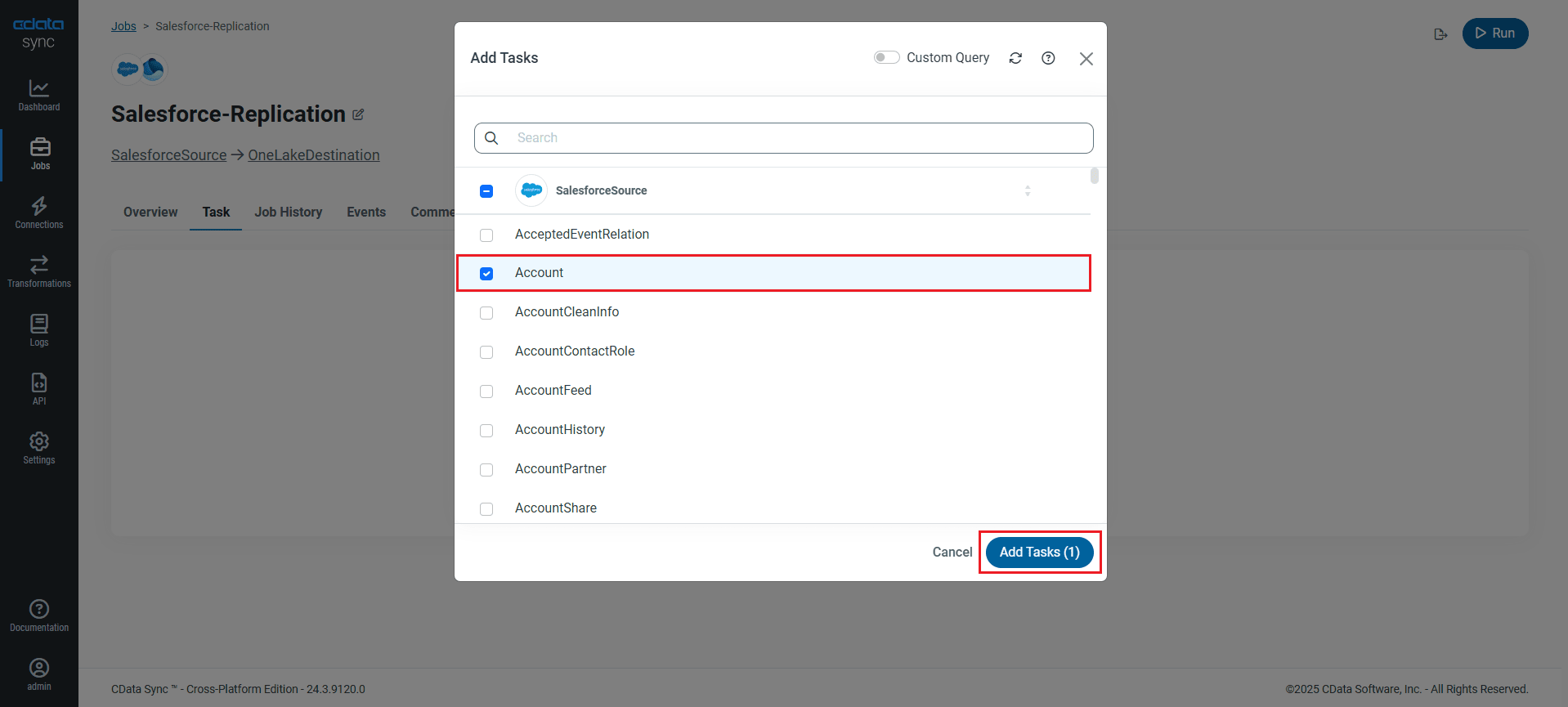

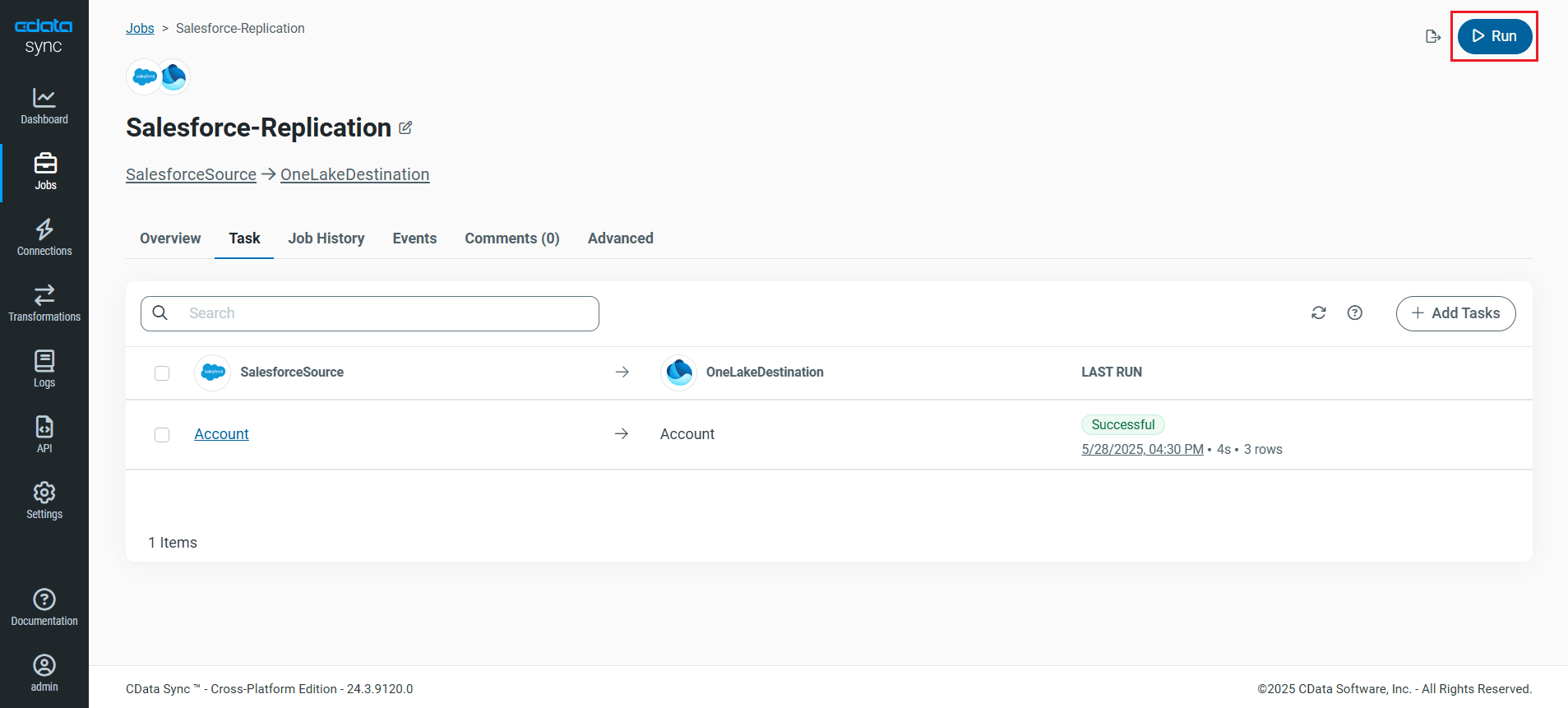

To replicate an entire table, navigate to the Task tab in the Job, click Add Tasks, choose the table(s) from the list of Elasticsearch tables you wish to replicate into OneLake, and click Add Tasks again.

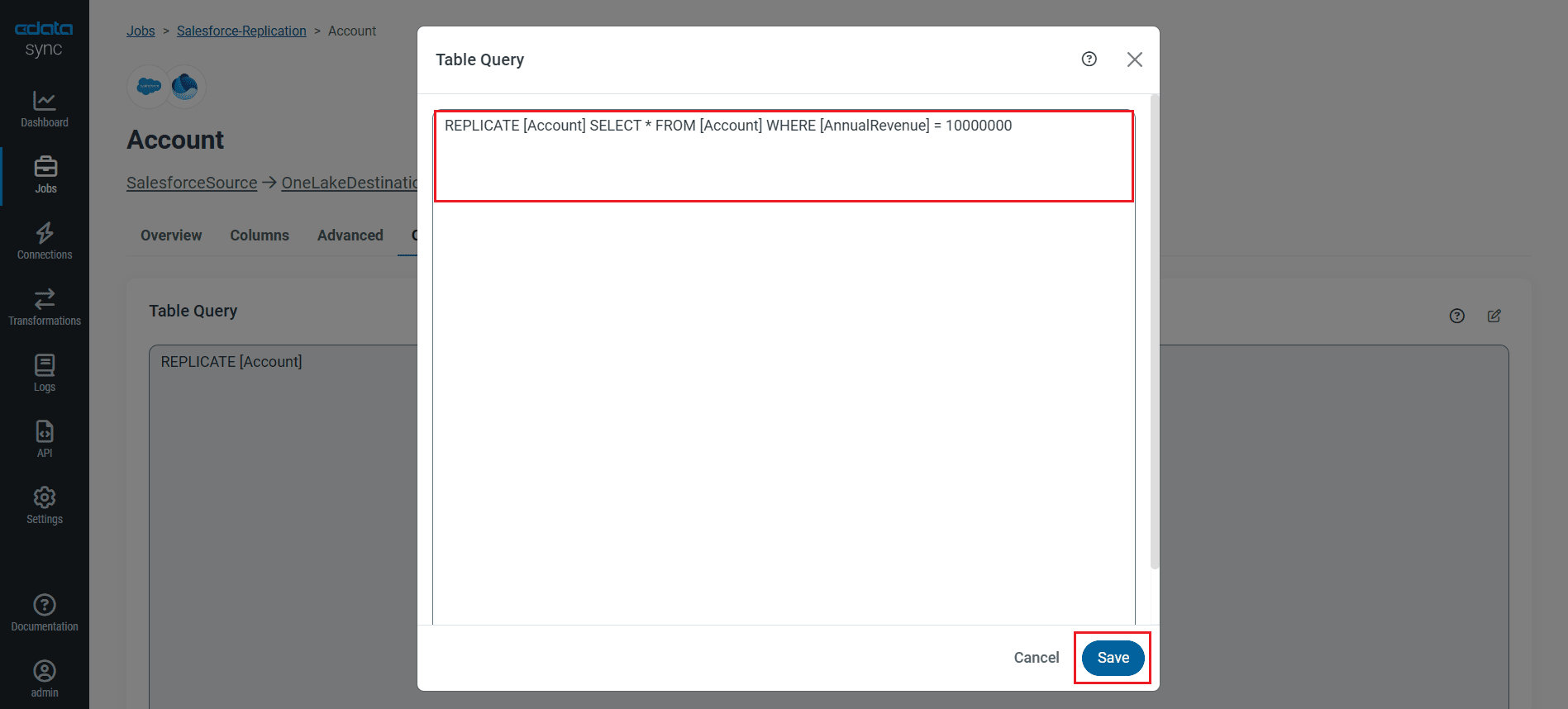

Customize Your Replication

You can use the Columns and Query tabs of a task to customize your replication. The Columns tab allows you to specify which columns to replicate, rename the columns at the destination, and even perform operations on the source data before replicating. The Query tab allows you to add filters, grouping, and sorting to the replication with the help of SQL queries.
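In Sync these customizations are made through the UI and SQL. To make the semantics concrete, here is a plain-Python sketch of what a column mapping (select, rename, transform) and a filter plus sort do to a set of rows. It is an illustration only: the mapping format and the function names are hypothetical and say nothing about how Sync executes queries internally.

```python
from typing import Callable, Iterable, Optional

# Hypothetical column mapping: source column -> (destination name, optional transform).
ColumnSpec = dict[str, tuple[str, Optional[Callable]]]


def apply_columns(rows: Iterable[dict], spec: ColumnSpec) -> list[dict]:
    """Keep only the mapped columns, rename them, and apply any transform."""
    out = []
    for row in rows:
        new_row = {}
        for src, (dest, fn) in spec.items():
            value = row.get(src)
            new_row[dest] = fn(value) if fn is not None else value
        out.append(new_row)
    return out


def apply_query(rows: Iterable[dict], where: Optional[Callable] = None,
                sort_key: Optional[Callable] = None,
                descending: bool = False) -> list[dict]:
    """Filter rows with a predicate (like WHERE) and sort them (like ORDER BY)."""
    kept = [row for row in rows if where is None or where(row)]
    if sort_key is not None:
        kept.sort(key=sort_key, reverse=descending)
    return kept
```

For example, the spec `{"id": ("OrderId", None)}` replicates only the `id` column and writes it as `OrderId` at the destination.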

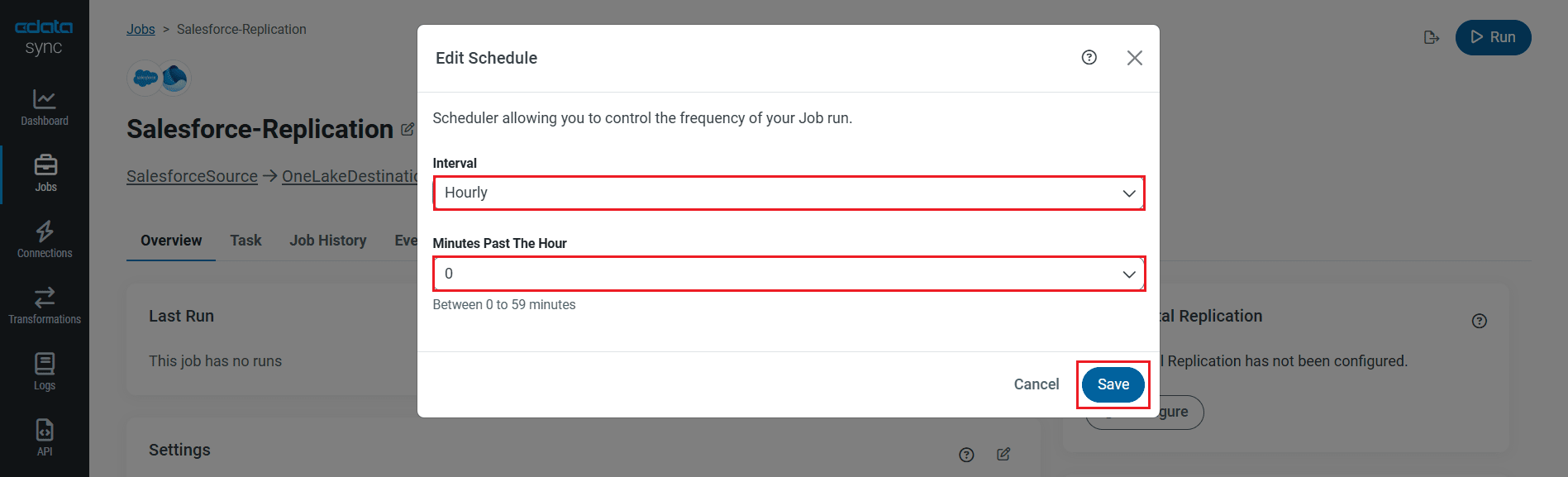

Schedule Your Replication

Select the Overview tab in the Job, and click Configure under Schedule. You can schedule a job to run automatically by configuring it to run at specified intervals, ranging from once every 10 minutes to once every month.

Once you have configured the replication job, click Save Changes. You can configure any number of jobs to manage the replication of your Elasticsearch data to OneLake.
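To see what a fixed-interval schedule means in practice, the Python below lists upcoming run times for a given start time and interval, rejecting intervals shorter than the 10-minute minimum mentioned above. It is a standalone sketch with a hypothetical function name, not Sync's scheduler.

```python
from datetime import datetime, timedelta

# Shortest interval the article mentions for scheduled jobs.
MIN_INTERVAL = timedelta(minutes=10)


def next_runs(start: datetime, interval: timedelta, count: int) -> list[datetime]:
    """Return the next `count` run times for a fixed-interval schedule."""
    if interval < MIN_INTERVAL:
        raise ValueError("interval is shorter than the 10-minute minimum")
    return [start + interval * i for i in range(1, count + 1)]
```

A monthly schedule cannot be expressed as a single fixed `timedelta`, because months differ in length, so calendar-based options are the more natural fit at that end of the range.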

Run the Replication Job

Once the job is fully configured, select the Elasticsearch table you wish to replicate and click Run. After the replication completes successfully, a notification appears showing the time taken to run the job and the number of rows replicated.

Free Trial & More Information

Now that you have seen how to replicate Elasticsearch data into OneLake, visit our CData Sync page to explore more about CData Sync and download a free 30-day trial. Start consolidating your enterprise data today!

As always, our world-class Support Team is ready to answer any questions you may have.