Migrating data from Paylocity to Databricks using CData SSIS Components

Easily push Paylocity data to Databricks using the CData SSIS Tasks for Paylocity and Databricks.

Databricks is a unified data analytics platform that allows organizations to easily process, analyze, and visualize large amounts of data. It combines data engineering, data science, and machine learning capabilities in a single platform, making it easier for teams to collaborate and derive insights from their data.

The CData SSIS Components enhance SQL Server Integration Services by enabling users to easily import and export data from various sources and destinations.

In this article, we explore the data type mapping considerations when exporting to Databricks and walk through how to migrate Paylocity data to Databricks using the CData SSIS Components for Paylocity and Databricks.

Data Type Mapping

| Databricks Schema | CData Schema |
|---|---|
| int, integer, int32 | int |
| smallint, short, int16 | smallint |
| double, float, real | float |
| date | date |
| datetime, timestamp | datetime |
| time, timespan | time |
| string, varchar | If length > 4000: nvarchar(max); otherwise: nvarchar(length) |
| long, int64, bigint | bigint |
| boolean, bool | tinyint |
| decimal, numeric | decimal |
| uuid | nvarchar(length) |
| binary, varbinary, longvarbinary | binary(1000) or varbinary(max) after SQL Server 2000 |

Special Considerations

- String/VARCHAR: String columns from Databricks map to different data types depending on the column length. If the length exceeds 4000, the column is mapped to nvarchar(max). Otherwise, it is mapped to nvarchar(length).

- DECIMAL: Databricks supports DECIMAL types with up to 38 digits of precision. Source columns with higher precision can cause load errors.
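The mapping rules above can be sketched as a small lookup helper, which may be handy for checking a schema before building the data flow. This is an illustrative sketch only: `map_databricks_type` and its parameters are hypothetical names and are not part of the CData components. Binary types are left out because the table lists two possible targets for them.

```python
# Hypothetical helper that mirrors the mapping table above.
# It is not part of the CData SSIS Components.

SIMPLE_TYPES = {
    "int": "int", "integer": "int", "int32": "int",
    "smallint": "smallint", "short": "smallint", "int16": "smallint",
    "double": "float", "float": "float", "real": "float",
    "date": "date",
    "datetime": "datetime", "timestamp": "datetime",
    "time": "time", "timespan": "time",
    "long": "bigint", "int64": "bigint", "bigint": "bigint",
    "boolean": "tinyint", "bool": "tinyint",
}

MAX_NVARCHAR_LENGTH = 4000   # threshold from the String/VARCHAR rule
MAX_DECIMAL_PRECISION = 38   # limit from the DECIMAL rule


def map_databricks_type(type_name, length=None, precision=None):
    """Return the CData schema type for a Databricks type name."""
    name = type_name.strip().lower()

    if name in SIMPLE_TYPES:
        return SIMPLE_TYPES[name]

    if name in ("string", "varchar"):
        if length is None:
            raise ValueError("a column length is needed for string types")
        if length > MAX_NVARCHAR_LENGTH:
            return "nvarchar(max)"
        return f"nvarchar({length})"

    if name == "uuid":
        if length is None:
            raise ValueError("a column length is needed for uuid")
        return f"nvarchar({length})"

    if name in ("decimal", "numeric"):
        # Flag columns that exceed the supported precision before loading.
        if precision is not None and precision > MAX_DECIMAL_PRECISION:
            raise ValueError(
                f"precision {precision} exceeds {MAX_DECIMAL_PRECISION} digits"
            )
        return "decimal"

    raise ValueError(f"no mapping listed for type: {type_name}")
```

For example, `map_databricks_type("string", length=5000)` falls on the nvarchar(max) side of the 4000-character threshold, while a length of 255 yields nvarchar(255).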

Prerequisites

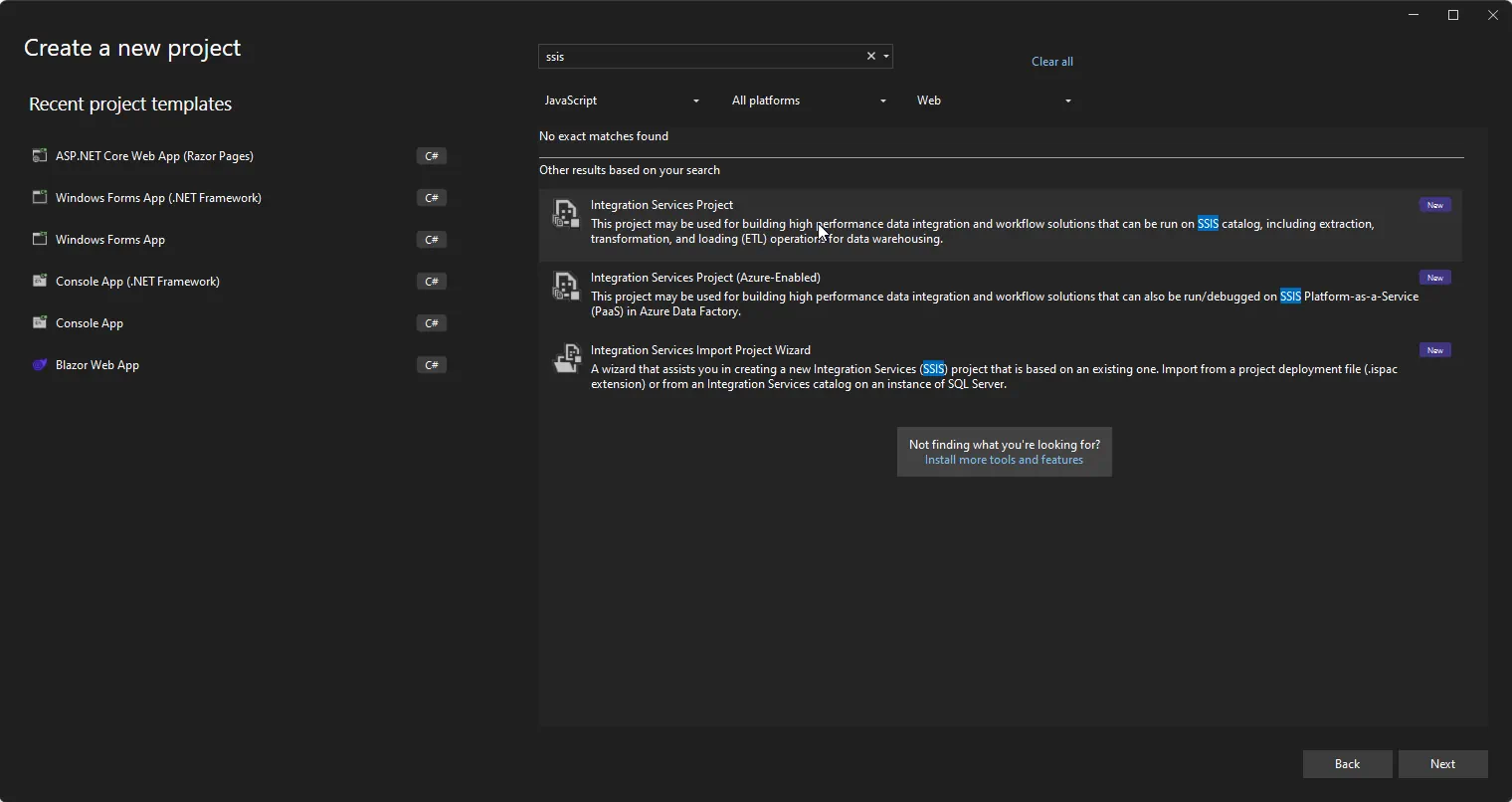

- Visual Studio 2022

- SQL Server Integration Services Projects extension for Visual Studio 2022

- CData SSIS Components for Databricks

- CData SSIS Components for Paylocity

Create the project and add components

- Open Visual Studio and create a new Integration Services Project.

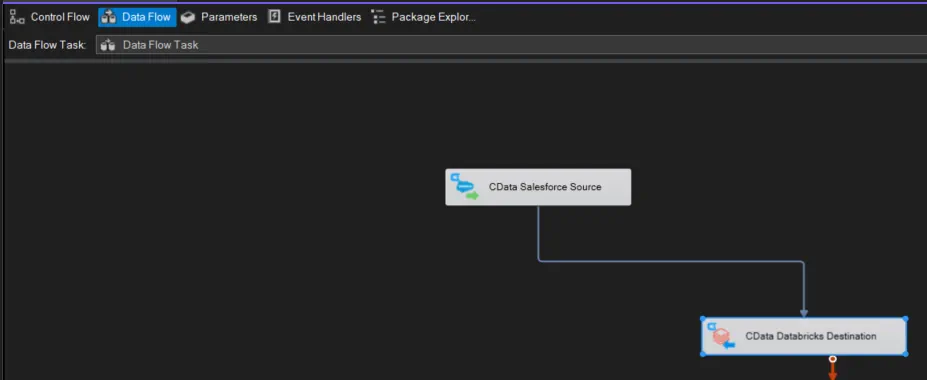

- Add a new Data Flow Task to the Control Flow screen and open the Data Flow Task.

- Add a CData Paylocity Source control and a CData Databricks Destination control to the data flow task.

Configure the Paylocity source

Follow the steps below to specify properties required to connect to Paylocity.

-

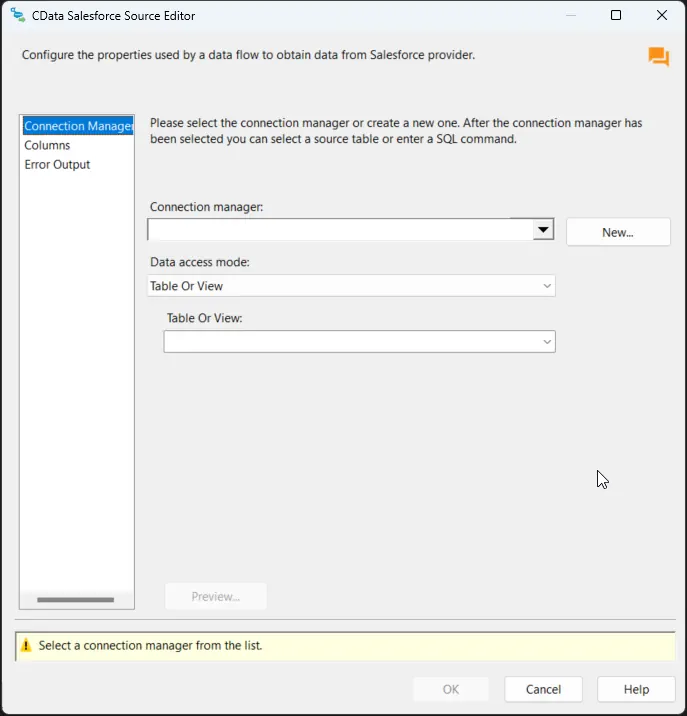

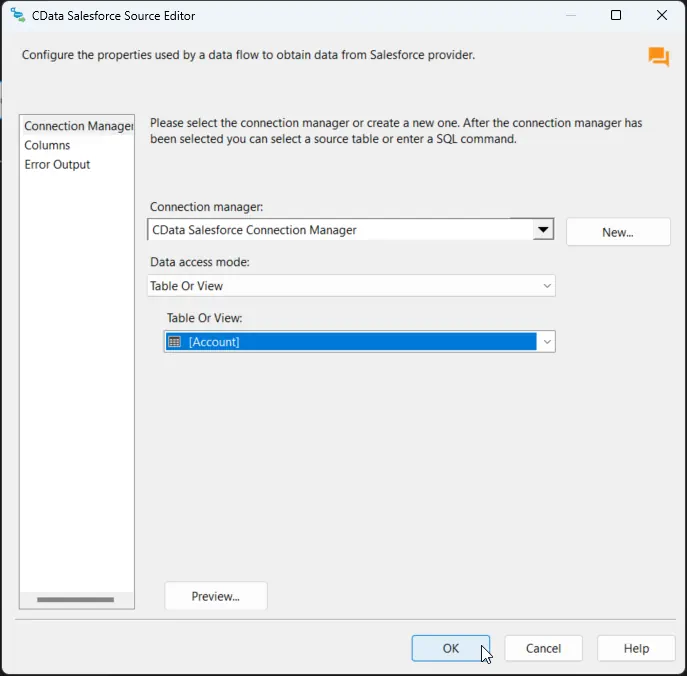

Double-click the CData Paylocity Source to open the source component editor and add a new connection.

- In the CData Paylocity Connection Manager, configure the connection properties, then test and save the connection.

Set the following to establish a connection to Paylocity:

- RSAPublicKey: Set this to the RSA key associated with your Paylocity account if RSA encryption is enabled in that account. This property is required for executing Insert and Update statements. It is not required if the feature is disabled.

- UseSandbox: Set this to true if you are using a sandbox account.

- CustomFieldsCategory: Set this to the custom fields category. This is required when IncludeCustomFields is set to true. The default value for this property is PayrollAndHR.

- Key: The AES symmetric key (base64-encoded), encrypted with the Paylocity public key. This is the key used to encrypt the content. Paylocity decrypts the AES key using RSA decryption. This property is optional when the IV value is not provided; in that case, the driver generates a key internally.

- IV: The AES IV (base64-encoded) used when encrypting the content. This property is optional when the Key value is not provided; in that case, the driver generates an IV internally.
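If you choose to supply Key and IV yourself rather than letting the driver generate them, the raw material could be produced along the following lines. This is a minimal sketch under stated assumptions: the function name is hypothetical, the 32-byte key length (AES-256) is an assumption, and the 16-byte IV matches the AES block size. It stops before the RSA step: the Key property expects the AES key encrypted with the Paylocity public key, which needs an RSA-capable library and is not shown here.

```python
import base64
import secrets


def generate_aes_material(key_bytes=32, iv_bytes=16):
    """Generate a random AES key and IV, both base64-encoded.

    Hypothetical helper. The key returned here is NOT yet encrypted with
    the Paylocity public key, so it is not ready to use as the Key
    property; the RSA encryption step is omitted from this sketch.
    key_bytes=32 assumes AES-256; iv_bytes=16 is the AES block size.
    """
    key = secrets.token_bytes(key_bytes)
    iv = secrets.token_bytes(iv_bytes)
    return (
        base64.b64encode(key).decode("ascii"),
        base64.b64encode(iv).decode("ascii"),
    )


if __name__ == "__main__":
    raw_key_b64, iv_b64 = generate_aes_material()
    print("IV (base64):", iv_b64)
```

Leaving both properties empty and letting the driver generate them internally avoids this step entirely.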

Connect Using OAuth Authentication

You must use OAuth to authenticate with Paylocity. OAuth requires the authenticating user to interact with Paylocity using the browser. For more information, refer to the OAuth section in the Help documentation.

The Pay Entry API

The Pay Entry API is completely separate from the rest of the Paylocity API. It uses a separate Client ID and Secret, and must be explicitly requested from Paylocity for access to be granted for an account. The Pay Entry API allows you to automatically submit payroll information for individual employees, and little else. Due to the extremely limited nature of what is offered by the Pay Entry API, we have elected not to give it a separate schema, but it may be enabled via the UsePayEntryAPI connection property.

Please be aware that when UsePayEntryAPI is set to true, you may only use the CreatePayEntryImportBatch and MergePayEntryImportBatch stored procedures, the InputTimeEntry table, and the OAuth stored procedures. Attempts to use other features of the product will result in an error. You must also store your OAuthAccessToken separately, which often means setting a different OAuthSettingsLocation when using this connection property.

- After saving the connection, select "Table or view" and select the table or view to export into Databricks, then close the CData Paylocity Source Editor.

Configure the Databricks destination

With the Paylocity Source configured, we can configure the Databricks connection and map the columns.

-

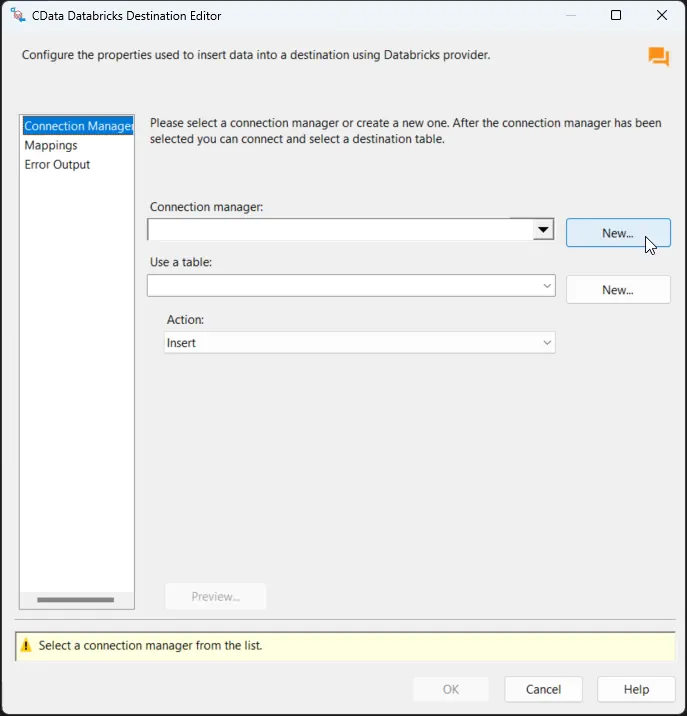

Double-click the CData Databricks Destination to open the destination component editor and add a new connection.

-

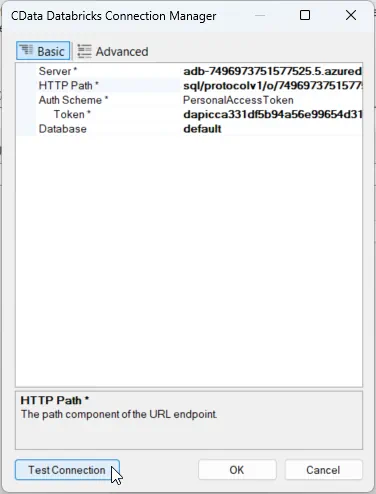

In the CData Databricks Connection Manager, configure the connection properties, then test and save the connection. To connect to a Databricks cluster, set the properties as described below.

Note: The needed values can be found in your Databricks instance by navigating to Clusters, selecting the desired cluster, and selecting the JDBC/ODBC tab under Advanced Options.

- Server: Set to the Server Hostname of your Databricks cluster.

- HTTPPath: Set to the HTTP Path of your Databricks cluster.

- Token: Set to your personal access token (this value can be obtained by navigating to the User Settings page of your Databricks instance and selecting the Access Tokens tab).

Other helpful connection properties

- QueryPassthrough: When this is set to True, queries are passed through directly to Databricks.

- ConvertDateTimetoGMT: When this is set to True, the components will convert date-time values to GMT, instead of the local time of the machine.

- UseUploadApi: Setting this property to true will improve performance if there is a large amount of data in a Bulk INSERT operation.

- UseCloudFetch: This option specifies whether to use CloudFetch to improve query efficiency when the table contains over one million entries.
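If you keep these settings in a script or configuration file rather than typing them into the Connection Manager, they could be assembled as shown below. This is a sketch only: it assumes the common semicolon-separated `Name=Value` connection string form, `build_connection_string` is a hypothetical helper, and the values shown are placeholders, not real credentials.

```python
def build_connection_string(properties):
    """Join connection properties into a 'Name=Value;' string.

    Hypothetical helper; assumes a semicolon-separated Name=Value format.
    Properties set to None are skipped and booleans become true/false.
    """
    parts = []
    for name, value in properties.items():
        if value is None:
            continue
        if isinstance(value, bool):
            value = "true" if value else "false"
        value = str(value)
        if ";" in value:
            # Quoting rules are outside the scope of this sketch.
            raise ValueError(f"value for {name} contains ';'")
        parts.append(f"{name}={value}")
    return ";".join(parts) + ";"


if __name__ == "__main__":
    # Placeholder values; copy the real ones from the JDBC/ODBC tab.
    print(build_connection_string({
        "Server": "your-server-hostname",
        "HTTPPath": "your-http-path",
        "Token": "your-personal-access-token",
        "UseUploadApi": True,
    }))
```

Keeping the token out of source control, for example by reading it from an environment variable before calling the helper, is a sensible precaution.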

-

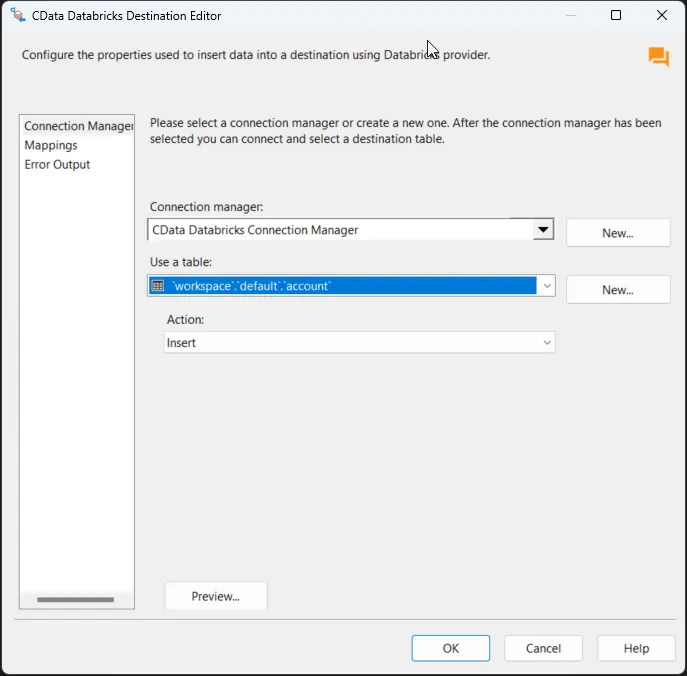

After saving the connection, select a table in the Use a Table menu and in the Action menu, select Insert.

-

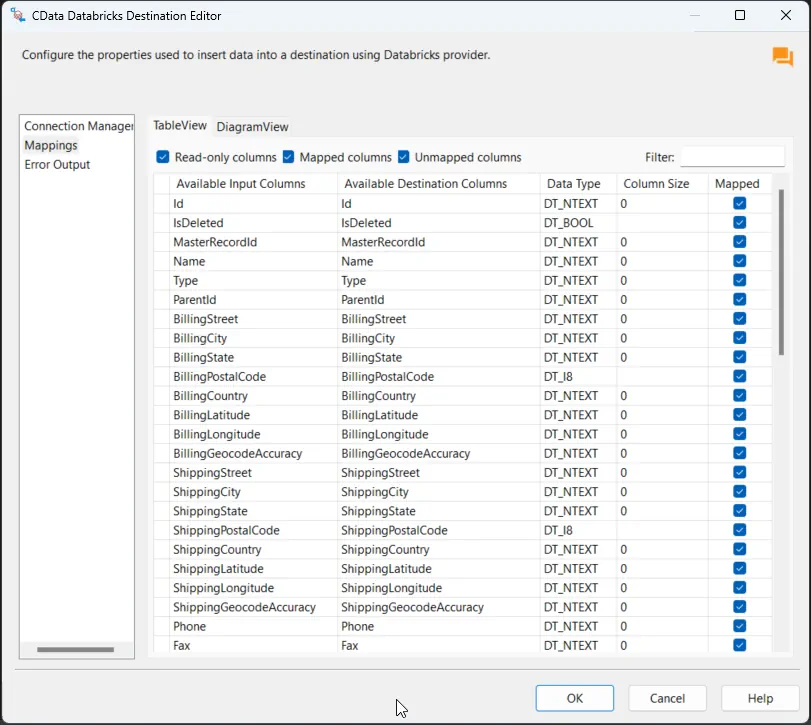

On the Column Mappings tab, configure the mappings from the input columns to the destination columns.

Run the project

You can now run the project. After the SSIS Task has finished executing, the data from the selected Paylocity table or view will be exported to the chosen Databricks table.